I had a conversation with Claude last Wednesday. Spent maybe forty minutes walking it through a product launch plan: the positioning, the pricing tiers, the three segments we were targeting. Good conversation, felt productive. On Thursday morning I opened a new chat to refine the email sequence for that same launch.

"Could you tell me more about your product?" Forty minutes of context, gone. Either corrupted or half-remembered.

If you use AI tools regularly, you've had this moment. You've had it dozens of times. And at some point you stopped being surprised and started being annoyed. You copy-paste the same background into every new conversation. You keep a Google Doc of "stuff to tell the AI." You re-explain your job, your preferences, your constraints, over and over, to the most capable technology ever built.

Here's the thing nobody tells you clearly: your AI isn't forgetting. It never knew you in the first place. What felt like a relationship was a transaction.

## Every conversation is an island

The way these tools work under the hood is genuinely counterintuitive. When you chat with ChatGPT or Claude or Gemini, the model doesn't "learn" from your conversation. Nothing you say changes the model itself. It processes your messages, generates a response, and when the session ends, the next session starts from a blank slate.

Think of it like calling a support hotline where the agents don't have a CRM. You might get someone brilliant. They might solve your problem in five minutes flat. But tomorrow when you call back? Different person. No notes in the file. You start from "can I get your account number?"

There's a technical term for this. AI conversations are stateless. Each one exists independently, unconnected to anything before or after it.
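A minimal sketch of what "stateless" means in practice. The `fake_model` function is a hypothetical stand-in for any chat model, not a real API; the point is only that the model can use what is passed in on that call and nothing else.

```python
def fake_model(messages: list[dict]) -> str:
    """Hypothetical stand-in for a chat model: it only sees `messages`."""
    text = " ".join(m["content"] for m in messages if m["role"] == "user")
    if "launch plan" in text:
        return "Here is a refined email sequence for your launch plan."
    return "Could you tell me more about your product?"


# Wednesday: one session, with context built up inside it.
wednesday = [
    {"role": "user", "content": "Here is our launch plan: positioning, pricing tiers, three segments."},
    {"role": "user", "content": "Draft the email sequence."},
]
wednesday_reply = fake_model(wednesday)

# Thursday: a new session starts from an empty history.
thursday = [{"role": "user", "content": "Refine the email sequence."}]
thursday_reply = fake_model(thursday)
```

Nothing from the `wednesday` list reaches the Thursday call unless you put it there yourself.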

Three specific things make this worse than it sounds:

The token window rolls over. Even within a single conversation, there's a limit to how much the model can "see." ChatGPT and Claude have large context windows (128K to 1M tokens, depending on the model), which for some models works out to roughly 200 pages of text. But in long conversations, older messages start falling off the edge. The model isn't summarizing them or filing them away. They're just not there anymore. Ask about something from the beginning of a two-hour coding session and you might get a blank stare. There is also something called context rot. In simple terms, the more information you load into the context, the less reliably the model uses it and the more it hallucinates about it. It may accurately recall only 20-30% of what you gave it. Not ideal.

Each platform is its own silo. This one is brutal for anyone who uses more than one AI tool. And most power users do: ChatGPT for brainstorming, Claude for longer analysis, Gemini when you need something integrated with Google, Perplexity for research. Each one knows a fragment of you. None of them share notes. Your preferences in Claude don't exist in ChatGPT. That Perplexity research session about competitor pricing? Invisible to every other tool you use.

The "memory" features are thinner than they look. ChatGPT does have a memory feature: it will store facts like your name, your job, that you prefer bullet points over paragraphs. Claude has something similar. But these are stored facts, not understanding. ChatGPT remembered that I work in AI infrastructure. It did not remember the forty-minute launch strategy. That's the gap. Flat facts versus actual context from working sessions.
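The rolling token window described above can be sketched as a simple budget check. This is an illustration under stated assumptions, not how any particular product trims its history: `count_tokens` is a crude word count rather than a real tokenizer, and `visible_messages` is a hypothetical helper.

```python
def count_tokens(text: str) -> int:
    """Crude estimate (assumption): one token per whitespace-separated word."""
    return len(text.split())


def visible_messages(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages that fit in `budget`; drop older ones."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > budget:
            break  # everything older than this point is no longer visible
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


history = [
    "our pricing has three tiers",
    "segment one is small agencies",
    "now draft the welcome email",
]
window = visible_messages(history, budget=10)
```

With a budget of 10 and five words per message, only the two most recent messages survive. The pricing message is not summarized or archived; it is simply absent from what the model sees.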

## "But ChatGPT and Claude have memory now"

I know. I've tested it extensively. Let me tell you what it actually does.

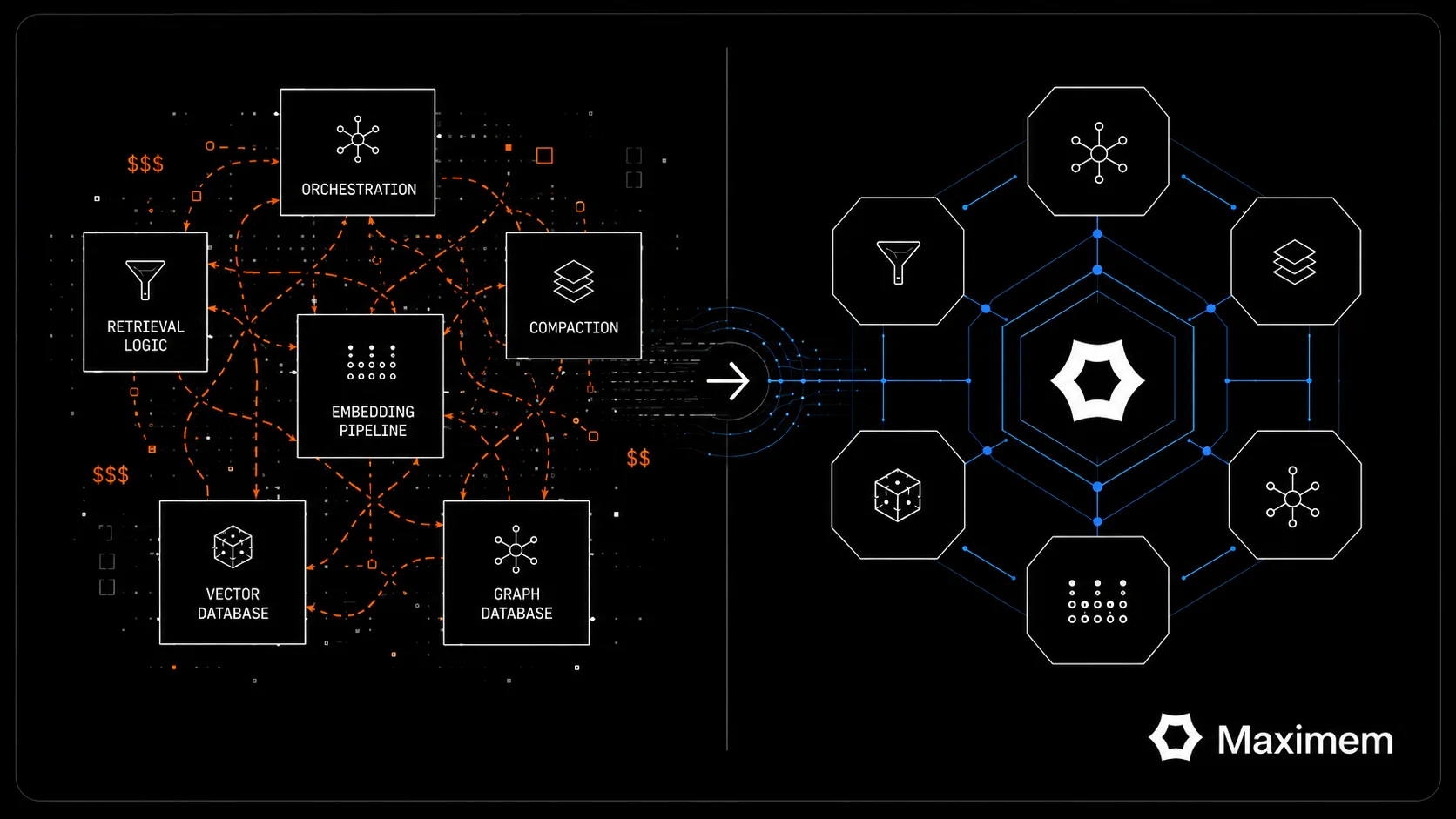

ChatGPT's memory system keeps lightweight summaries of your recent conversations, roughly your last fifteen chats. Not the AI's responses, just compressed sketches of what you typed. It also stores explicit facts about you (your name, role, preferences) that get injected into every single prompt, whether relevant or not. One researcher who dug into this found 33 stored facts about himself riding along in every conversation. His gym schedule was being fed to ChatGPT when he asked about Python debugging.
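To make the "injected into every single prompt" behavior concrete, here is a rough sketch. It is not ChatGPT's actual implementation; `STORED_FACTS` and `build_prompt` are hypothetical names, and the facts are illustrative examples.

```python
# Illustrative stored facts (assumption): the kind of flat details a memory feature keeps.
STORED_FACTS = [
    "Works in AI infrastructure.",
    "Prefers bullet points over paragraphs.",
    "Goes to the gym on weekday mornings.",
]


def build_prompt(user_message: str, facts: list[str]) -> str:
    """Prepend every stored fact to the prompt, with no relevance filtering."""
    fact_block = "\n".join(f"- {fact}" for fact in facts)
    return f"Known facts about the user:\n{fact_block}\n\nUser: {user_message}"


prompt = build_prompt("Why does my Python script raise a KeyError?", STORED_FACTS)
```

The gym schedule rides along with the debugging question because nothing in `build_prompt` asks whether a fact is relevant.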

Claude takes a different approach. Instead of pre-loading summaries, it has retrieval tools it can use to search your past conversations. The operative word is "can." Claude has to decide, mid-conversation, that looking into your history would help. Sometimes it does. Sometimes it doesn't think to check. When it works, it's genuinely impressive; you get detailed context from three weeks ago. When it doesn't, you get nothing. [We did a deep dive into how each platform handles this](/blog/ai-apps-memory) and the short version is: all three major platforms are engineering their own isolated memory, and none of them talk to each other.
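The retrieval-on-demand pattern can be sketched the same way. This is a simplified illustration, not Claude's implementation: `should_search` is a hypothetical keyword check standing in for the model's own decision to look at history, and `search_history` is a naive word-overlap search.

```python
import re

PAST_CONVERSATIONS = [
    "Launch plan: three pricing tiers and three target segments.",
    "Debugging notes for a flaky integration test.",
]


def words(text: str) -> set[str]:
    """Lowercased alphanumeric words in `text`."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search_history(query: str, conversations: list[str]) -> list[str]:
    """Return past conversations that share at least one word with the query."""
    query_words = words(query)
    return [c for c in conversations if query_words & words(c)]


def should_search(message: str) -> bool:
    """Hypothetical stand-in for the model deciding whether to check history."""
    lowered = message.lower()
    return "last time" in lowered or "previous" in lowered


def gather_context(message: str, conversations: list[str]) -> list[str]:
    """Search history only if the decision step says it is worth it."""
    if not should_search(message):
        return []
    return search_history(message, conversations)


hit = gather_context("Use the pricing tiers from last time", PAST_CONVERSATIONS)
miss = gather_context("Refine the pricing tiers", PAST_CONVERSATIONS)
```

Both messages are about the same pricing tiers, but only the first one triggers a search. The second gets no history at all, which mirrors the "sometimes it doesn't think to check" failure mode.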

Gemini's situation is different again. Strong integration with your Google ecosystem; it can pull from Drive, Gmail, Calendar. But your conversations with Gemini don't inform your conversations with ChatGPT or Claude. It's another walled garden, just with Google-shaped walls.

The pattern: three platforms, three separate attempts at memory, three siloed systems that can't see past their own borders.

## Bigger context windows won't fix this

I hear this one constantly. "GPT-5 will have a million-token context window, so the forgetting problem goes away." It won't. Here's why.

A larger context window makes individual conversations longer. It doesn't make them connected. You can fit an entire book into a single session, sure. But close that session and open a new one tomorrow, and you get the same blank slate, no matter how many tokens the window supports.

There's also a cost issue nobody likes talking about. Context isn't free. Every token you stuff into that window costs money on the API side and computation on the inference side. The bigger the window, the more expensive every single message becomes. A 200K-token conversation with Claude Sonnet costs meaningfully more than a fresh 2K-token one. The platforms aren't going to solve this by just making windows bigger, because that doesn't scale economically.
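A back-of-the-envelope version of that cost argument. The price below is a hypothetical placeholder, not any provider's actual rate; only the ratio matters here.

```python
# Hypothetical input price in USD per million tokens (assumption, for illustration only).
PRICE_PER_MILLION_INPUT_TOKENS = 3.00


def input_cost(context_tokens: int,
               price_per_million: float = PRICE_PER_MILLION_INPUT_TOKENS) -> float:
    """Cost of sending `context_tokens` of context with a single message."""
    return context_tokens / 1_000_000 * price_per_million


long_cost = input_cost(200_000)   # a message sent with 200K tokens of context
short_cost = input_cost(2_000)    # a message sent with 2K tokens of context
ratio = long_cost / short_cost
```

Under linear per-token pricing, the ratio is about 100 whatever price you plug in: the message sent with 200K tokens of context costs roughly a hundred times what the fresh 2K-token one does.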

And even within a long conversation, there's a well-documented problem researchers call "lost in the middle": models pay more attention to information at the beginning and end of their context window and tend to miss things buried in the center. Bigger windows can actually make this worse, not better. More hay, same needle.

## What you're actually losing

Let's be concrete about the cost. Cottrill Research reported in 2025 that nearly half of knowledge workers spend one to five hours every day searching for information they've already accessed. UC Irvine's research on interruptions found it takes about 23 minutes to get back in the zone after each break in focus. Every time you re-explain your role, your project constraints, your communication preferences, that's an interruption. A self-inflicted one, caused by tools that should already know this.

We tracked our own stats when we helped users create their AI Year End Wrapped. On average, users revised their prompts in over half of their conversations. Half the time, they're teaching the AI something it should already know.

Multiply this by every AI tool you use. Multiply by every working day. The cumulative friction is enormous, and it's invisible because it's distributed across hundreds of small moments. No single re-explanation feels like a big deal. But together, they eat hours every week.

The subtler cost is harder to measure: the good conversations that never happen because you don't bother setting up the context. You wanted to ask Claude to refine yesterday's strategy. But that would mean re-pasting the strategy, re-explaining the market context, re-describing the team dynamics. So you just... don't. You work from the draft you already have, even though a fresh pass with full context might have caught the flaw in your pricing model.

## What you can do about it right now

Three options, escalating in effectiveness.

The manual workaround. Keep a document (some people use Notion, some use a simple text file) with your standard context: role, current projects, communication preferences, key constraints. Paste it at the top of every new conversation. It works. It's tedious. It doesn't transfer between platforms. You become your own memory system, which, let's be honest, defeats a lot of the purpose of using AI in the first place.

Use the platform-native memory features. Turn on ChatGPT's memory. Use Claude Projects to store background documents. Set up Gemini's Google integrations. This gets you further. But each one only works inside its own platform. If you're a multi-platform user (and the data suggests most regular AI users are), you're still maintaining separate contexts everywhere.

Use a cross-app memory layer. This is a newer category. Tools that sit above all your AI platforms and maintain one unified memory across everything. A Chrome extension, typically, that captures context from your conversations and makes it available everywhere you go. What you discussed in ChatGPT on Monday is available when you open Claude on Wednesday.

We built Vity for exactly this reason. It's a free Chrome extension that syncs your memory across ChatGPT, Claude, Gemini, Perplexity, DeepSeek, Grok, and more: ten platforms currently. It captures context from your conversations (with your consent, always), then proactively surfaces relevant memories as you type in any supported app. You approve what gets included. Nothing gets injected without you seeing it first. The whole thing runs on an encrypted vault that we can't read even if we wanted to.

But my bias is showing. If you want an apples-to-apples look at how Vity stacks up against other options in this category, we published a detailed comparison of Vity, Mem0, and Supermemory that covers setup, platform support, privacy architecture, and memory depth. Read it and decide for yourself.

## The forgetting problem isn't going away on its own

Here's the uncomfortable truth. Smarter models don't fix statelessness. GPT-5 will be smarter than GPT-4. It will still start every conversation from zero. Claude's next version will be more capable. It still won't know what you told the current version last Tuesday.

The forgetting isn't a technical limitation that next year's model update solves. It's an architectural choice. Each conversation is designed to be independent. That's actually useful for privacy and safety; it means your conversations don't bleed into each other or into other people's experiences. But it also means no AI tool, on its own, will ever "remember you" the way a colleague does.

Memory has to be solved as a separate layer. Something that sits above the models, captures what matters, and brings it back when it's relevant. Whether that's Vity, or one of the alternatives, or something you build yourself with a text file and discipline, the core insight is the same.
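For the build-it-yourself route, here is a minimal sketch of a separate memory layer backed by a single file. It is a toy under stated assumptions, not how Vity or any other product works: the function names, the JSON file, and the naive word-overlap matching are all illustrative choices.

```python
import json
import re
import tempfile
from pathlib import Path


def load(path: Path) -> list[str]:
    """Read all saved notes, or an empty list if the file does not exist yet."""
    return json.loads(path.read_text()) if path.exists() else []


def remember(path: Path, note: str) -> None:
    """Capture: append one note to the memory file."""
    notes = load(path)
    notes.append(note)
    path.write_text(json.dumps(notes, indent=2))


def recall(path: Path, message: str) -> list[str]:
    """Bring back notes that share at least one word with the new message."""
    wanted = set(re.findall(r"[a-z0-9]+", message.lower()))
    return [n for n in load(path) if wanted & set(re.findall(r"[a-z0-9]+", n.lower()))]


def with_memory(path: Path, message: str) -> str:
    """Build the text to paste into any AI tool: relevant notes, then the message."""
    relevant = recall(path, message)
    if not relevant:
        return message
    context = "\n".join(f"- {note}" for note in relevant)
    return f"Context from earlier sessions:\n{context}\n\n{message}"


with tempfile.TemporaryDirectory() as folder:
    memory_file = Path(folder) / "memory.json"
    remember(memory_file, "Launch pricing has three tiers.")
    remember(memory_file, "Prefers bullet points over paragraphs.")
    prompt = with_memory(memory_file, "Refine the email about pricing")
```

Because the file lives outside any one platform, the same `prompt` can be pasted into whichever tool you open next. Word overlap is a deliberately crude relevance test; common words like "the" would cause false matches in a real notes file.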

Your AI tools are not going to start remembering you. You have to give them a memory.

---

## Frequently Asked Questions

Why does ChatGPT forget everything between conversations?

ChatGPT conversations are stateless by design. Each new chat starts with no knowledge of previous sessions. ChatGPT does have a built-in memory feature that stores basic facts about you, but it only retains lightweight summaries of recent chats and doesn't carry over the detailed context from working sessions.

Can AI remember things between conversations?

Not natively. ChatGPT, Claude, and Gemini each have limited memory features that work within their own platform, but none of them share memory across platforms. Cross-app memory tools like Vity solve this by maintaining a single memory layer that works across all major AI platforms.

Does Claude have memory like ChatGPT?

Claude has a different approach. Instead of pre-loading conversation summaries, Claude uses retrieval tools that can search your past conversations on demand. It offers a larger context window (up to 1M tokens) for longer sessions, but like ChatGPT, its memory only works within Claude itself.

How do I make AI remember my preferences?

Three options: (1) manually paste your preferences into every new chat, (2) use built-in features like ChatGPT Memory or Claude Projects within each platform, or (3) use a cross-app memory extension that syncs your preferences across all AI platforms automatically.

What is cross-app AI memory?

Cross-app AI memory is a layer that captures context from your conversations across multiple AI platforms (ChatGPT, Claude, Gemini, Perplexity, etc.) and makes it available everywhere. Instead of each AI tool having its own isolated memory, you have one unified memory that follows you. Learn more about [how cross-app memory works](/cross-app-memory).

Is there a way to sync memory across ChatGPT and Claude?

Not through the platforms themselves: ChatGPT and Claude don't share data with each other. Cross-app memory tools bridge this gap. Vity's Chrome extension (which also works with OpenClaw) captures context from both platforms and surfaces relevant memories in either one, so preferences and project context travel with you.