I was sitting across from a pre-seed founder last month who'd just built their first agent. Real excitement in the room. They'd stitched together a RAG system, wired it into Claude Sonnet 4.6, and suddenly it was handling customer support tickets. Worked great for the proof of concept.

Then they looked at their Anthropic bill.

A single query on their old RAG setup cost roughly $0.002. The same query through the agent? Closer to $0.02. The agent was asking questions differently. Checking three tools. Reformulating queries. The context window was exploding. They'd been staring at pricing pages and doing napkin math, but the actual cost was ten times worse. And they were terrified about what would happen when conversations got longer, or they got more users, or the agent had to juggle multiple concurrent tasks.

I get it. Most founders think about agent costs wrong.

They see the per-token price on the Anthropic or OpenAI page (Claude Sonnet 4.6 at $3 input and $15 output per million tokens; GPT-5.1 at around $1.25 input and $10 output). They multiply that by their estimated token volume. They do a little mental math. They convince themselves it'll scale.

That is not how this works. The actual cost stack has layers. Most people never see them on their billing dashboard until it's too late.

The Three Direct Cost Layers

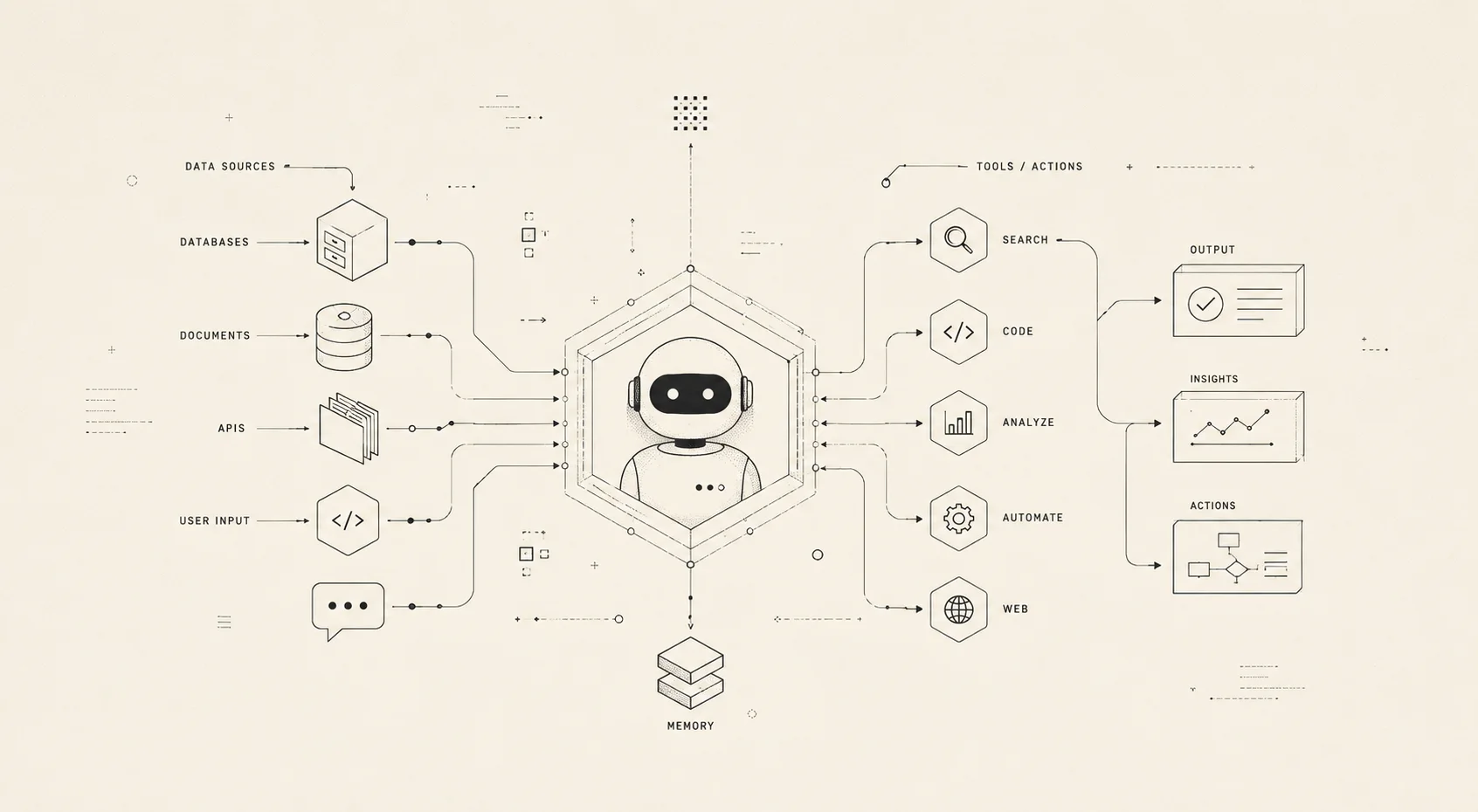

Agent costs sit in a stack. The first layer is the obvious one.

Layer 1: Token Costs (the one everyone talks about)

Output tokens are genuinely expensive. You're paying 3 to 15 times more for each token your model generates than for each token you send it. That's the math. If you're using Sonnet, output tokens cost five times what input tokens cost. Obvious enough. But here's what kills founders: they forget to count the token tax that happens before the user ever types anything.

Your agent has 15 tools. It's connected to three MCP servers. You've got a system prompt that's 2,000 words long. Every single request to your LLM repeats all of that. Tool definitions. MCP schemas. System instructions. The user's actual query is often smaller than the infrastructure wrapping around it.

A typical agentic system burns 2,000 to 5,000 tokens on definitions alone. Every request. Before anything useful happens.

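Now multiply by daily volume. At Sonnet's $3 per million input tokens, 5,000 definition tokens across 500 runs is $7.50 a day if each run makes a single LLM call. Agents rarely stop at one: at four calls per run, that's $30 a day, or $15 on definitions by lunch.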
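Here is a rough way to sanity-check the definition tax for your own agent. It's a sketch, not a billing model: `calls_per_run` (how many LLM calls one agent run makes) is an assumption you'd replace with your own number, and the price default is the Sonnet input rate quoted above.

```python
def daily_definition_cost(definition_tokens, runs_per_day,
                          calls_per_run=1, input_price_per_mtok=3.00):
    """Dollars per day spent re-sending tool definitions and the system prompt."""
    tokens = definition_tokens * runs_per_day * calls_per_run
    return tokens / 1_000_000 * input_price_per_mtok

# 5,000 definition tokens, 500 runs a day (figures from the text above).
single_call = daily_definition_cost(5_000, 500)
# calls_per_run=4 is an illustrative assumption, not a measured value.
four_calls = daily_definition_cost(5_000, 500, calls_per_run=4)

print(f"1 call per run:  ${single_call:.2f}/day")
print(f"4 calls per run: ${four_calls:.2f}/day")
```

The point is less the exact dollar figure than the multiplier: every extra LLM call inside a run pays the full definition tax again.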

Layer 2: Context Accumulation Costs (the one that sneaks up)

Conversations don't stay short. Users come back. They ask follow-ups. They expect the agent to remember what they talked about yesterday.

So the agent resends the entire conversation history with each new turn. Layer 1 was expensive. This layer grows quadratically with conversation length, because every new turn re-sends every turn before it.

By the time you've accumulated 50,000 tokens of conversation history, the majority of your bill isn't generating new answers anymore. It's re-reading old messages. The model is spending computational effort on context it's already seen. You're paying to remind it of conversations it just had. This is part of [the hidden cost of context decay](/blog/insights/expensively-forgetful) that most teams don't budget for.

The math looks like this: total cost per conversation equals output price multiplied by output tokens per call multiplied by number of calls, plus input price multiplied by average context length multiplied by number of calls. The second term is the killer. It grows faster than you expect because context length and call count increase together: once history dominates the context, doubling the number of turns roughly quadruples that term. Month two, you're not just paying twice as much. You're paying five times as much.
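To make that second term concrete, here is a small simulation. The numbers are illustrative assumptions, not measurements: a 3,000-token fixed prefix, 1,000 tokens of new history per turn, 300 output tokens per turn, and the Sonnet prices quoted earlier.

```python
def conversation_cost(turns, base_context_tokens=3_000, tokens_added_per_turn=1_000,
                      output_tokens_per_turn=300,
                      input_price_per_mtok=3.00, output_price_per_mtok=15.00):
    """Dollar cost of one conversation when the full history is resent every turn."""
    input_tokens = 0
    context = base_context_tokens
    for _ in range(turns):
        input_tokens += context           # the whole context is billed again
        context += tokens_added_per_turn  # and it is bigger next time
    output_tokens = output_tokens_per_turn * turns
    return (input_tokens * input_price_per_mtok
            + output_tokens * output_price_per_mtok) / 1_000_000

for turns in (5, 10, 20):
    print(f"{turns:>2} turns: ${conversation_cost(turns):.4f}")
```

Under these assumptions, going from 10 to 20 turns doubles the output cost but more than triples the input cost, which is the re-reading tax described above.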

Layer 3: Infrastructure and Engineering Costs (the invisible layer)

This is the bill that doesn't show up in the API console.

You need a vector database to manage embeddings. You need compute infrastructure to run that embedding pipeline. You probably need a graph database to handle entity relationships. You need engineers maintaining all of it. Not one engineer. One engineer to keep the lights on, then one more to maintain system accuracy, then one more to improve coverage. Then one of your senior engineers gets pulled off core product to argue about whether you should use dense retrieval or hybrid search.

Extending a model with retrieval capability costs somewhere between $750K and $1M at mid-market engineering rates. That gets you 2 to 3 dedicated engineers over 6 to 9 months.

Your LLM API bill might be $10K a month. Your infrastructure costs might be $30K. Your engineering costs might be $40K. And most founders are only watching the first number.

The Four Hidden Costs Nobody Budgets For

These don't show up anywhere on your billing dashboard. And yet they often exceed everything above combined.

Cost 1: Missed Accuracy

When your agent gives a wrong answer because the context was stale or incomplete, you don't get a line item for it. The billing system doesn't flag it. You get something worse: silence. You lose the customer interaction. You lose the upsell moment. You lose the ticket that was supposed to be deflected.

The opportunity cost here is silent, compounding, and absolutely massive. Most teams discover this six months in when they realize their agent is technically "working" but nobody's using it because the quality is mediocre. The responses repeat questions the user just answered. The context is irrelevant. The user stops trusting it.

Fixing this requires going back to your cost stack and reconsidering which layer is actually the problem. Do you need better retrieval? A different model? More context? The team splits their focus. Product waits. The numbers deteriorate.

Cost 2: The Wrong Model for the Task

Most teams make one decision and live with it. Pick Claude Sonnet or Opus. Route everything through it. Call it a day.

But context management (summarization, extraction, classification) has a completely different compute profile than reasoning. Using your most expensive model for triage work is like hiring a surgeon to arrange your desk. Using a cheap model for complex reasoning is like using a Swiss Army knife for actual surgery.

Teams that get this right use model routing. Cheaper models for classification. Expensive models only for the tasks that actually need them. This is almost never the default behavior. The default is "let's just use the best model for everything and optimize later."
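A router doesn't have to be sophisticated to beat the default. The sketch below is a minimal rule-based version; the tier names and the set of task kinds are hypothetical placeholders, and a real system would likely classify tasks with something smarter than a string label.

```python
from dataclasses import dataclass

# Hypothetical tier names; map them to whatever models you actually run.
CHEAP_TIER = "cheap-model"
EXPENSIVE_TIER = "expensive-model"

# Context-management work that rarely needs the most capable model.
CONTEXT_MANAGEMENT_TASKS = {"summarization", "extraction", "classification", "triage"}

@dataclass(frozen=True)
class Task:
    kind: str
    prompt: str

def route(task: Task) -> str:
    """Send context-management work to the cheap tier, everything else to the expensive one."""
    if task.kind in CONTEXT_MANAGEMENT_TASKS:
        return CHEAP_TIER
    return EXPENSIVE_TIER

print(route(Task("classification", "Is this ticket about billing?")))
print(route(Task("reasoning", "Work out why this refund was rejected.")))
```

Defaulting unknown task kinds to the expensive tier is a deliberate choice here: a misrouted hard task costs you accuracy, while a misrouted easy task only costs you tokens.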

Later never comes.

Cost 3: Poor Customer Experience

Subpar context leads to agents that repeat questions, forget preferences, or surface irrelevant history. Users don't file bugs for this. They don't complain in your feedback form. They just quietly stop using the product.

Churn is harder to measure than tokens. Way harder. But it's the real cost.

Cost 4: Observability and Continuous Improvement

You cannot improve what you cannot measure. Most teams don't have visibility into what was retrieved, whether it was relevant, whether the agent actually used it, or why it didn't work.

Building observability into your context pipeline is a separate engineering effort. A real one. It takes months. And without it, you're flying blind. You're spending all this money on tokens and infrastructure without any actual signal about whether the context is helping.

You're optimizing in the dark.
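Even a crude trace gives you a first signal. The sketch below assumes your pipeline can record which retrieved chunks the final answer actually drew on (`cited_ids`). That attribution step is the hard part and is not shown here. All field names are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class RetrievalTrace:
    query: str
    retrieved_ids: list[str]                            # what was put into context
    cited_ids: list[str] = field(default_factory=list)  # what the answer actually used
    resolved: bool = False                              # did the interaction succeed?

def context_utilization(traces):
    """Fraction of retrieved chunks that the agent actually used."""
    retrieved = sum(len(t.retrieved_ids) for t in traces)
    used = sum(len(set(t.cited_ids) & set(t.retrieved_ids)) for t in traces)
    return used / retrieved if retrieved else 0.0

traces = [
    RetrievalTrace("reset password", ["a", "b", "c", "d"], cited_ids=["b"], resolved=True),
    RetrievalTrace("refund status", ["e", "f"], cited_ids=["e", "f"], resolved=True),
]
print(f"context utilization: {context_utilization(traces):.0%}")
```

A low utilization number means you are paying input-token prices for chunks the agent never uses, which is a retrieval problem rather than a model problem.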

Why the Math Gets Ugly at Scale

Let's walk through a concrete scenario. Imagine your agent launches in Month 1.

You're handling 100 conversations a day. Average conversation length is 5 turns. That's straightforward. The math works. You're spending maybe $100 a day on tokens.

By Month 3, adoption has picked up. You're handling 500 conversations a day now. Users are getting comfortable with the agent. They're asking more questions. Average conversation is now 8 turns.

By Month 6, you've gone aggressive on go-to-market. 1,000 conversations a day. Power users are starting to form. They have longer conversations. 12 turns average.

Volume is up 10x, and turns per conversation are up 2.4x. Your costs don't 10x. They don't even 24x.

They explode.

Why? Because longer conversations mean more context per turn. More users mean more unique contexts to maintain and retrieve. If you're running multi-agent coordination (which you probably are if you've been around long enough to worry about costs), the system as a whole can burn roughly 15 times more tokens than a single-agent chat, because every agent carries its own context and the agents have to pass results to each other. Tool-heavy agents also run at roughly a 100:1 input-to-output token ratio, because each call mostly sends context and receives a small structured output.

Throw in the "lost in the middle" problem, where language models effectively ignore 76 to 82 percent of content in the middle of their context window. You're paying for tokens that are actively degrading your performance. You're paying to make things worse. This is [why bigger context windows aren't the answer](/blog/insights/context-windows-not-memory) when your actual problem is retrieval strategy.

At scale, costs become non-linear. They stay invisible until you've already overcommitted.
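You can put rough numbers on the Month 1, 3, and 6 scenario. The sketch below counts input tokens only and assumes each conversation starts from a 3,000-token prefix and gains 1,000 tokens of history per turn. Both figures are illustrative assumptions; the conversation and turn counts come from the scenario above.

```python
def daily_input_tokens(conversations, turns, base_context=3_000, added_per_turn=1_000):
    """Input tokens per day when every turn resends the full conversation so far."""
    per_conversation = sum(base_context + i * added_per_turn for i in range(turns))
    return conversations * per_conversation

# (conversations per day, average turns) from the scenario in the text.
scenario = {"Month 1": (100, 5), "Month 3": (500, 8), "Month 6": (1_000, 12)}
baseline = daily_input_tokens(*scenario["Month 1"])

for month, (conversations, turns) in scenario.items():
    tokens = daily_input_tokens(conversations, turns)
    print(f"{month}: {conversations / 100:.0f}x volume, "
          f"{tokens / baseline:.1f}x input tokens")
```

Under these assumptions, a 10x increase in conversations turns into roughly a 40x increase in input tokens, and that is before multi-agent overhead or retrieval payloads are counted.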

A Mental Model for Agent Cost Decisions

You need a framework. Not the kind you find in a spreadsheet. The kind that changes how you think about the problem.

Question 1: Is your agent single-session or multi-session?

Single-session means the conversation starts fresh and ends within one window. Your context window costs dominate. Optimize for prompt efficiency. Every token counts.

Multi-session means the agent is talking to the same user across multiple days. Memory infrastructure costs dominate. Optimize for intelligent retrieval, not just shoving everything into context. Understanding [what the memory layer alone costs](/blog/insights/real-cost-diy-agent-memory) is critical before you build.

These are fundamentally different optimization problems. Most teams treat them the same way.

Question 2: What is your cost per successful outcome?

Not cost per token. Nobody cares about that. Cost per resolved ticket. Cost per completed task. Cost per satisfied user.

If your agent saves 20 minutes of human work and costs $0.50 per interaction, that is unambiguously cheap. If your agent costs $1.00 per interaction and saves nobody any time, that is expensive at any price.

This is the question that separates engineering from business. Get it right and you suddenly know what to optimize for.
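As a sketch, the two numbers worth tracking look like this. The success rate and the $30 loaded hourly rate are assumptions for illustration; only the $0.50, $1.00, and 20-minute figures come from the text.

```python
def cost_per_successful_outcome(total_cost, interactions, success_rate):
    """Total spend divided by the interactions that actually resolved something."""
    successes = interactions * success_rate
    if successes == 0:
        return float("inf")
    return total_cost / successes

def net_value_per_interaction(cost, minutes_saved, loaded_hourly_rate):
    """Value of human time saved minus what the interaction cost."""
    return minutes_saved / 60 * loaded_hourly_rate - cost

# 1,000 interactions at $0.50 each, 60% of which resolve the task (assumed rate).
print(f"${cost_per_successful_outcome(500.0, 1_000, 0.6):.2f} per resolved task")

# The two agents from the text, valued at an assumed $30/hour of human time.
print(f"${net_value_per_interaction(0.50, 20, 30.0):+.2f} net per interaction")
print(f"${net_value_per_interaction(1.00, 0, 30.0):+.2f} net per interaction")
```

Note how the success rate moves the first number: a cheap interaction that only succeeds 60 percent of the time costs noticeably more per outcome than its sticker price suggests.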

Question 3: Which cost is growing the fastest as you scale?

Look at your trends week over week. Find the line that's steepest. That's where you focus.

Usually it's one of four things: conversation history bloat because you're keeping too much context, redundant tool calls because your prompts are inefficient, re-processing unchanged context because you're not caching properly, or engineering hours spent firefighting context quality because you built the retrieval system wrong.

Find that line. That's your problem.
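Finding the steepest line is a few lines of code once you have weekly totals per cost category. The category names and dollar figures below are made up for illustration; the function compares average week-over-week growth rather than absolute size.

```python
def weekly_growth(series):
    """Average week-over-week growth rate across the series (compound)."""
    if len(series) < 2 or series[0] <= 0:
        raise ValueError("need at least two weeks and a positive starting value")
    return (series[-1] / series[0]) ** (1 / (len(series) - 1)) - 1

def fastest_growing(weekly_costs):
    """Name of the cost category with the steepest average weekly growth."""
    return max(weekly_costs, key=lambda name: weekly_growth(weekly_costs[name]))

# Hypothetical weekly spend in dollars, four weeks per category.
weekly_costs = {
    "conversation_history": [100, 150, 225, 340],
    "redundant_tool_calls": [80, 88, 97, 106],
    "uncached_context": [60, 66, 70, 75],
    "engineering_firefighting": [200, 210, 220, 230],
}

worst = fastest_growing(weekly_costs)
print(f"{worst}: {weekly_growth(weekly_costs[worst]):.0%} per week")
```

Ranking by growth rate rather than by current spend is the design choice that matters: in this made-up data, engineering time is the biggest line today, but conversation history is the one that will overtake everything else.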

Three Levers That Actually Work

Most teams don't use any of them.

Lever 1: Model Routing

Use GPT-5.1 for triage and classification. Use Claude Opus only for the reasoning tasks that justify the expense. This feels obvious when you write it down. Most teams put their best model on everything.
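To see what routing is worth, compute the blended price you pay per million tokens. The prices and the 70 percent cheap-traffic share below are illustrative assumptions, not quotes for any particular model.

```python
def blended_price_per_mtok(cheap_share, cheap_price, expensive_price):
    """Average price per million tokens when a share of traffic goes to the cheap model."""
    if not 0.0 <= cheap_share <= 1.0:
        raise ValueError("cheap_share must be between 0 and 1")
    return cheap_share * cheap_price + (1 - cheap_share) * expensive_price

# Assumed prices per million tokens and an assumed 70% share of triage-style traffic.
everything_on_best = blended_price_per_mtok(0.0, cheap_price=1.0, expensive_price=15.0)
with_routing = blended_price_per_mtok(0.7, cheap_price=1.0, expensive_price=15.0)

savings = 1 - with_routing / everything_on_best
print(f"blended price: ${with_routing:.2f}/M tokens ({savings:.0%} lower)")
```

The savings depend almost entirely on how much of your traffic is genuinely triage-grade, so measure that share before trusting any estimate like this one.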

Lever 2: Strategic Context Resets

This feels wrong but it's mathematically sound. Reestablishing context from memory is often cheaper than maintaining an ever-growing conversation. You pay a one-time cost to rebuild context. You avoid the ongoing tax of re-reading it every turn.
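The break-even is easy to check. This sketch compares input tokens only, and it assumes a reset costs one extra pass over the full history to produce a summary of `summary_tokens`. The specific sizes are illustrative, and the output tokens of the summary call are ignored.

```python
def input_tokens_for_turns(start_context, tokens_added_per_turn, turns):
    """Input tokens billed over `turns` turns when context grows each turn."""
    return sum(start_context + i * tokens_added_per_turn for i in range(turns))

def tokens_saved_by_reset(current_context, summary_tokens,
                          tokens_added_per_turn, remaining_turns):
    """Positive when resetting to a summary is cheaper than carrying full history."""
    keep_going = input_tokens_for_turns(current_context, tokens_added_per_turn,
                                        remaining_turns)
    # One-time cost: read the whole history once to build the summary.
    reset = current_context + input_tokens_for_turns(summary_tokens,
                                                     tokens_added_per_turn,
                                                     remaining_turns)
    return keep_going - reset

# 50,000 tokens of history, compressed to an assumed 2,000-token summary.
for remaining in (1, 2, 10):
    saved = tokens_saved_by_reset(50_000, 2_000, 1_000, remaining)
    print(f"{remaining:>2} turns left: {saved:+,} input tokens saved")
```

With these assumptions the reset loses slightly if the conversation ends on the next turn and wins from the second turn onward. The real risk is not the token math but what the summary drops.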

Lever 3: Prompt Caching

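Anthropic offers up to 90 percent cost reduction on cached input tokens: cache reads are billed at a fraction of the base input price, while cache writes carry a small premium. OpenAI discounts cached input tokens automatically and, separately, offers 50 percent discounts via their Batch API for work that can run asynchronously. Most teams don't use either because they haven't read the fine print or they think it's too complex to integrate. It's not.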
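On the Anthropic side, caching is opt-in: you mark the end of the stable prefix with a `cache_control` block. The sketch below only builds the request arguments, so it runs without an API key. The model id is a placeholder, and the resulting dict is intended to be passed to the `anthropic` Python SDK as `client.messages.create(**request)`.

```python
def build_cached_request(model, system_prompt, tools, user_message, max_tokens=1024):
    """Build Messages API arguments with the tools + system prompt marked cacheable."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "tools": tools,
        "system": [
            {
                "type": "text",
                "text": system_prompt,
                # Marks the end of the stable prefix: the tool definitions and this
                # system block can be served from cache on later requests.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # The part that changes every request stays outside the cached prefix.
        "messages": [{"role": "user", "content": user_message}],
    }

request = build_cached_request(
    model="your-model-id",  # placeholder, not a real model name
    system_prompt="You are a support agent for Example Co. ...",
    tools=[],               # your tool definitions would go here
    user_message="Where is my refund?",
)
print(request["system"][0]["cache_control"])
```

The cache only helps when the prefix is byte-for-byte stable, so keep timestamps, user names, and anything else that varies per request out of the system prompt and tool definitions. Very short prefixes fall below the minimum cacheable length and won't be cached at all.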

But here's the uncomfortable truth. These three levers are the tip of the iceberg.

Getting agent costs right depends almost entirely on how you manage, organize, and fetch context tokens at runtime. The retrieval strategy. The compaction logic. The freshness heuristics. The allocation across your context window. This is a complex engineering problem in its own right.

And this is where the biggest savings hide.

The exe.dev blog post about quadratic cost curves nails this perfectly. Most founders are optimizing the obvious layer while the actual cost explosion happens in context management. You can tweak your prompt all day. You'll move the dial 10 to 15 percent. But if your retrieval strategy is wrong, you're paying 10x more than you need to.

Back to the Founder

I told that pre-seed founder what I'm telling you now.

The answer isn't "use a cheaper model." The answer isn't "optimize your token count." The answer is: understand your cost stack before you optimize. Figure out which layer is actually growing. Most founders are optimizing the wrong layer and wondering why their costs won't budge.

Start with the question: what is my cost per successful outcome? If that number is good, your problem isn't cost. Your problem is growth. And that's a good problem to have.

If your cost per outcome is bad, then figure out why. Is it your model choice? Your context window size? Your retrieval strategy? Your infrastructure?

The moment you know which layer is actually killing you, the optimization becomes obvious.

And if context management is where you're bleeding money, that's what we built Synap for at Maximem Tech. Visit maximem.ai/synap to see how better context management collapses your token costs at scale. The complexity of getting this right deserves better than ad-hoc solutions.

The rest is just math.