Most AI agent teams don't have evals. They ship code, watch logs in Slack and fix things when users complain. It is a reactive cycle. This works until a subtle quality drift creeps in over weeks, adoption flatlines and nobody can actually explain why their agent stopped working as advertised.

The failure mode is a quiet degradation instead of a crash.

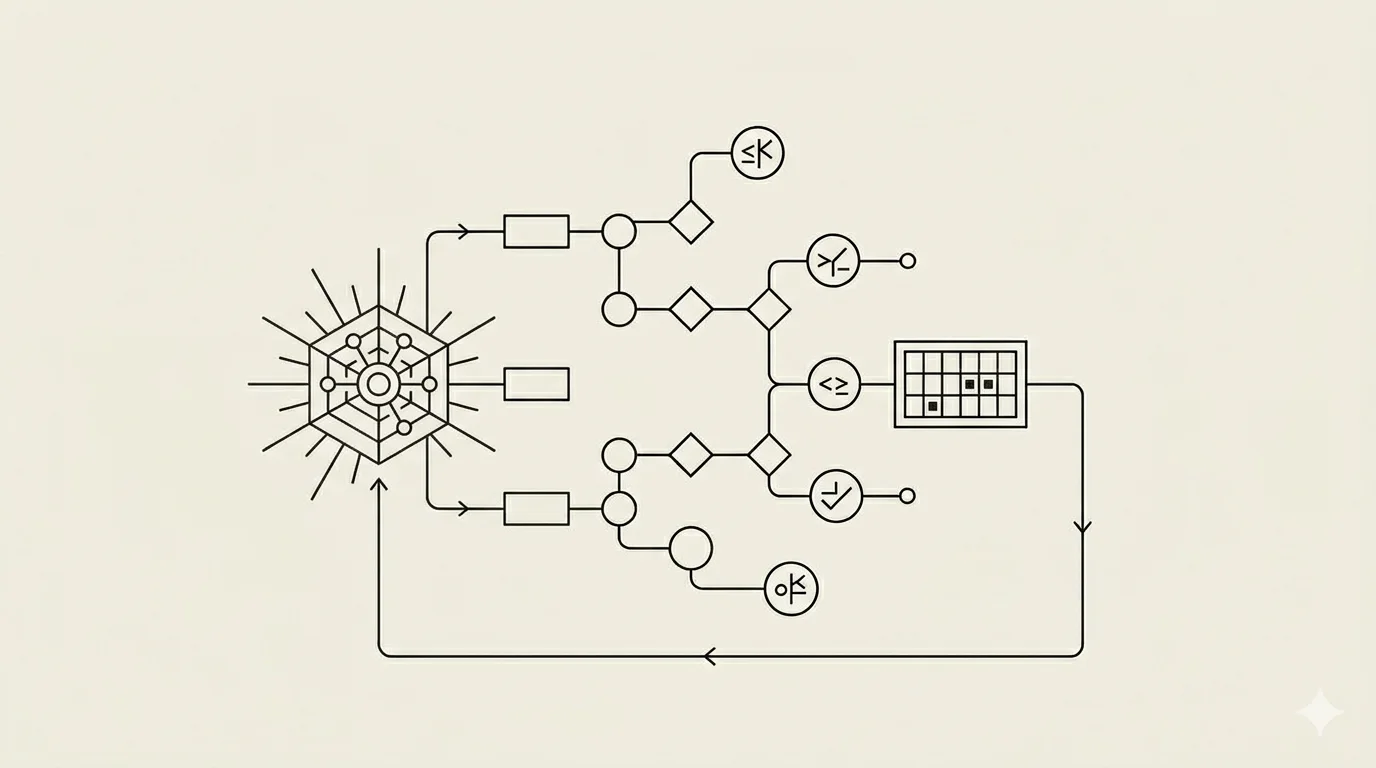

Evals catch that drift. They are how you notice something went wrong before your customer support team does. But here's the thing about agent evals that makes them different from the model evals you might already know about: agents aren't just input-output machines. They plan. They call tools. They observe what those tools return. They adjust and call more tools. They fail gracefully (or fail messily). Measuring whether the final answer is correct? It is necessary but insufficient.

Following is what the convergence looks like between what Anthropic published, what AWS is recommending, and what the open-source community has figured out. It's not theoretical. It's what actually works.

Why Agent Evals Are Different From Model Evals

Model evals are simple in most cases: you give the model an input, it produces an output, you check if that output is correct. Input to output. One step. Done.

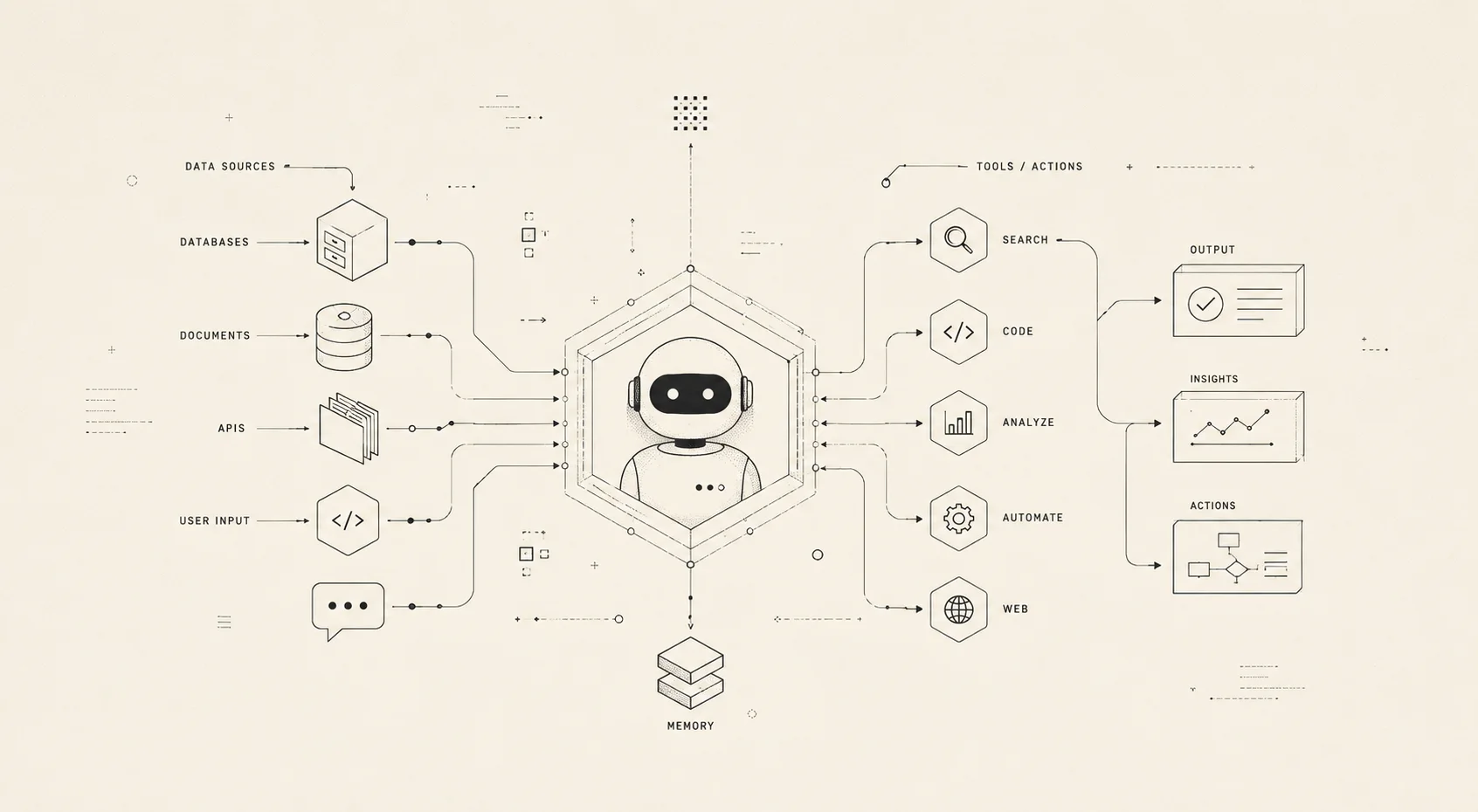

Agent evals are doing something fundamentally different. The agent plans, calls tools, observes results, adjusts, calls more tools, and eventually produces an output. The final answer matters, sure. But the path matters equally. Did it choose the right tools? Did it call them in a sensible order? When a tool failed, did it recover or just give up? How much did this cost in tokens? Did it stay within its scope or do something it shouldn't have?

Here is the problem with ignoring the path: an agent can arrive at the correct answer through bad reasoning. It got lucky. It made three unnecessary tool calls first. It misinterpreted the third tool's output and accidentally called the right thing anyway. This fragility pattern doesn't show up if you only check the final answer. You will think everything is fine until something shifts slightly and the whole thing falls apart.

Multi-step failure propagation is real. An error in step 2 corrupts steps 3 through 10. A single metric at the end misses the root cause entirely.

And there's the stochasticity thing: run the same agent on the same task three times, you get three different results. LLMs aren't deterministic. If you run your eval suite once, you're essentially rolling dice. Proper agent evals require running multiple trials per task and looking at consistency. This isn't paranoia. It's the baseline expectation.

Let me use the vocabulary that Anthropic and the community have converged on, because it makes everything clearer. A "task" is one test case with defined inputs and success criteria. A "trial" is one attempt at that task (you run multiple trials per task). A "transcript" is the complete record of what happened during a trial: every output, every tool call, every reasoning step. And the "outcome" is the final state when the trial ends.

That vocabulary matters because it changes how you think about problems.

Five Dimensions, Not One Metric

This is where most teams go wrong. They pick a single metric, usually accuracy, and call it done.Don't do that.

There are five distinct dimensions you need to evaluate, each measuring something different, each exposing different failure modes. Some of them don't directly correlate with each other either, which is the tricky part.

Correctness is the obvious one. Did the agent produce the right answer? Did it complete the task correctly? You're measuring accuracy versus ground truth or task completion rate. Most teams measure this. Most teams only measure this. It's necessary but not sufficient.

Tool Use Quality is the second dimension. Did the agent choose the right tools? Did it call them in a sensible order? Did it interpret the outputs correctly? What you're really measuring is tool selection accuracy and tool call efficiency. How many calls did it take to solve the problem? Industry research is messy here, but agents are averaging 50 tool calls per complex task. Some of that is fundamental to the problem. Some of it is wasted. Efficiency matters when you're at scale. Evaluating agent skills properly helps you understand where the waste is coming from.

Cost Efficiency matters because tokens cost. How many tokens did this task consume? Is that cost justified by the outcome? Multi-agent systems use 15x more tokens than single model conversations, by the way. That's not a variable cost in your spreadsheet. That directly affects whether your product is sustainable. Track tokens per successful completion. Understanding the agent cost stack helps you make smarter trade-offs here.

Latency is where I watch teams deprioritize things until it's too late. Time from user input to complete response. For interactive agents, the time to first token often matters more than total time. For voice agents, if you're not at sub-800 milliseconds, users notice the pause and lose confidence in the system. You can have the perfect answer arrive too slowly to be useful.

Safety and Alignment is the one that keeps me up. Did the agent avoid harmful outputs? Did it stay within its authorized scope? Did it resist adversarial inputs? I'm talking about jailbreak resistance, out-of-scope detection rates. The research here is genuinely alarming: agent failure rates in adversarial conditions range from 40 to 80 percent. This dimension is not optional. It's critical infrastructure.

Building Your Eval Pipeline: The Practical Steps

I am going to walk you through this linearly because it's actually the sequence that works.

Define 20 to 50 test cases. This is the foundation and people rush through it. You need happy paths (tasks your agent should absolutely nail). You need edge cases (tasks that break things). You need production failures you have already seen. For each case, write down the input, the expected outcome, and the success criteria. Make them specific. "The agent should work" is too vague. "The agent should successfully retrieve customer orders from the past 30 days and format them as a CSV with columns for order ID, date, and total" is something you can actually measure. Start small. Twenty cases that matter are better than two hundred cases that nobody can articulate.

Pick your framework. There are quite a few options now, and the ecosystem keeps shifting. DeepEval is good for general agent testing and uses a pytest-like interface with 20+ metrics built in. Ragas is specialized for RAG-heavy agents where retrieval quality is the bottleneck. Promptfoo handles prompt regression testing and runs locally, so nothing leaves your infrastructure. Braintrust bridges evals with production monitoring. Opik, from Comet, does multi-framework observability and supports LangChain, CrewAI, and AutoGen. Pick based on what your agent architecture looks like, not on prestige.

Implement and run. Start with 2-3 automated metrics. Correctness. Cost. Latency. That's it. Run multiple trials per task (3 to 5 minimum) to account for nondeterminism. Log the full transcript for every trial. You'll need those transcripts when you're debugging why something failed. Before you make any changes to your agent, baseline what you have right now. This gives you a reference point.

Add human review. Automated metrics catch maybe 80 percent of issues. The remaining 20 percent needs human eyes. Review 10 to 20 percent of the failed transcripts manually. Look for reasoning errors that your metrics don't capture. Look for subtle quality issues. Look for tone or style drift. AWS calls this the "crawl-walk-run" pattern: start with internal review, then expand the review pool as your confidence grows.

Integrate into CI/CD. This is the differentiator. Most guides skip this. Most teams implement evals as a manual process that runs sometimes and gets ignored. Build the eval suite into your continuous integration pipeline. Run evals on every code change and every prompt change. Fail the build if key metrics regress beyond your threshold. Store historical results to track trends. The pattern is straightforward: Code change -> Run eval suite (this takes 5 to 10 minutes) -> Compare against baseline -> Pass or block. This last step is what separates teams that actually ship reliably from teams that have an eval script in their GitHub that nobody runs.

The Mistakes Everyone Makes (And How to Avoid Them)

Three error-patterns I have seen a lot of agent builders make:

Evaluating only the final answer. You ignore the transcript, which means you'll never find root causes. An agent can produce the right answer through the wrong process. It made five unnecessary tool calls first. It misinterpreted something and accidentally recovered. It got lucky. This creates fragile systems that break unexpectedly. Always log and review the full transcript.

Running evals once at launch. Agents drift. Model updates happen. Your tool's API changes its behavior. Evals have to run continuously. Run them on every change. Make it automatic. The moment you treat evals as a one-time activity, you've already lost visibility into what's happening.

Measuring everything and acting on nothing. I've seen dashboards with 50 metrics on them. Nobody reads them. Pick 3 to 5 metrics that actually matter to your product. Set thresholds. When a threshold is breached, automate a response. Send a Slack alert. Block the deployment. Don't create surveillance theater.

Start Tomorrow

Evals aren't optional infrastructure. They're the difference between "our agent works" and "our agent works reliably." The best teams treat their eval suite the way backend engineers treat their test suite: it runs on every change, it catches regressions before users ever see them, and it gives everyone confidence to ship fast.

Here's what I'd do if I were starting from scratch tomorrow: write 20 test cases for the core tasks your agent should handle. Pick whichever framework feels least tedious for your setup. Run the eval suite locally first, verify it works, then integrate it into CI/CD. Everything else is iteration.

Good evals require good context, by the way. If your memory layer is serving up stale or irrelevant context, your eval metrics will catch that hard. That's a feature, not a bug. If you're new to eval terminology, the AI glossary covers the foundational concepts. Ship.