File-driven context management has been all the rage over the last few days, especially since Claude CoWork launched. It made me curious, so I tried a few things.

I ran an experiment across 5 distinct domains, from Python code to science exam questions. I ingested 50,000 documents from popular datasets and fired 5,000 queries at them.

The goal? To find out what all the noise about file-based search is about, and whether it's even real!

I started this expecting vector search to crush the benchmarks across the board. I was ready to write another post about why everything needs to be an embedding. But at 2:00 AM, watching my MacBook Pro heat up like a nuclear power plant, I realized we've been sold a 'Semantic Dream' that doesn't always match the engineering reality.

The results are, well, revealing.

Real-time Embedding generation is a TAX

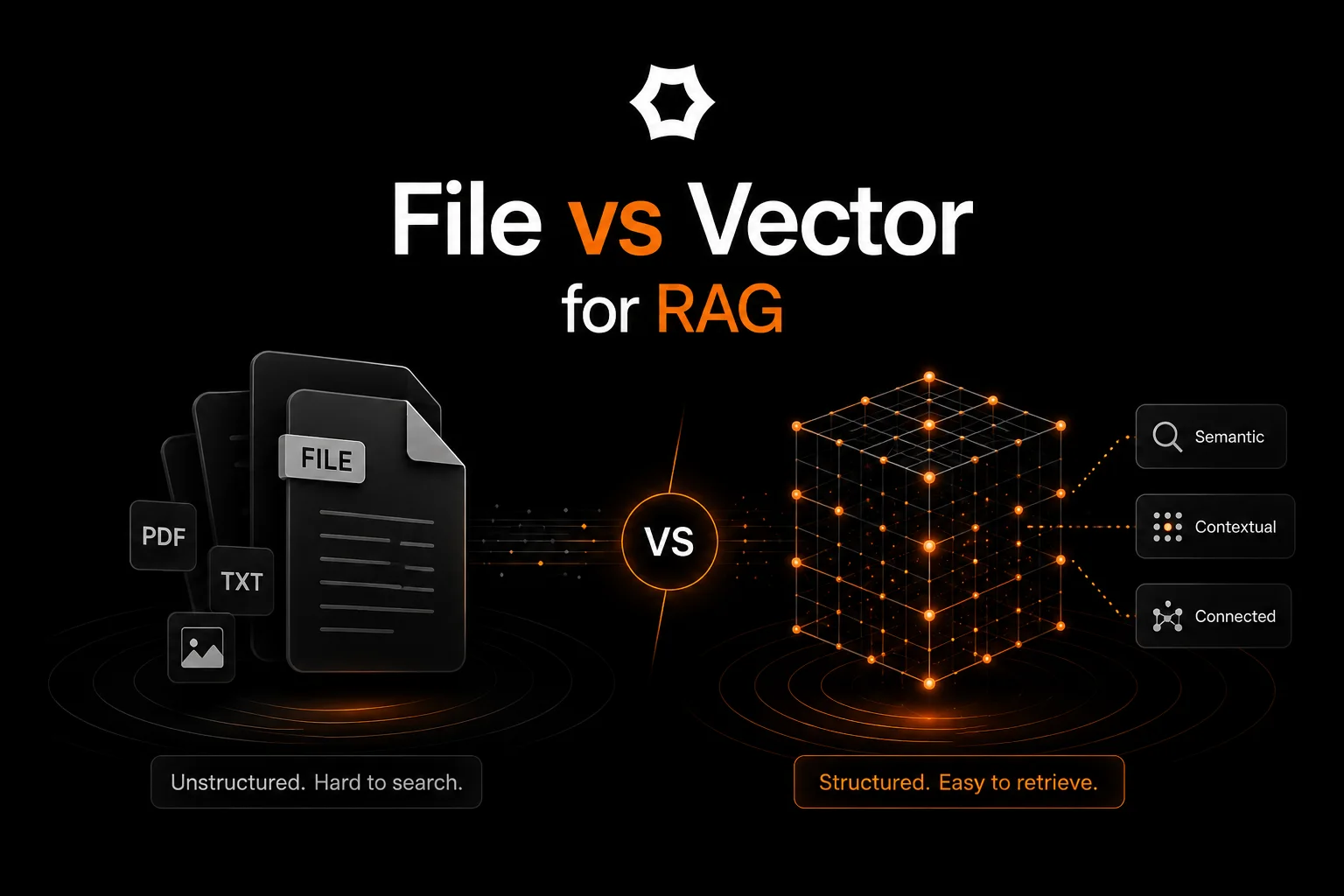

When you move from traditional File Search (using a tool like Tantivy, which finds exact words) to Vector Search (using a tool like Chroma, which finds similar concepts), you pay a heavy price: every document must first be run through an embedding model, and that compute costs real time before anything becomes searchable.
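To make the cost asymmetry concrete, here is a toy in-memory inverted index in plain Python. It is not Tantivy (a Rust library with real analyzers and BM25 ranking), and the tokenizer and overlap scoring are simplifying assumptions. It only illustrates why keyword ingestion is cheap: adding a document is tokenization plus a few set insertions, with no model inference. A vector store would additionally have to call an embedding model on every document before it became searchable.

```python
import re
from collections import defaultdict


def tokenize(text: str) -> list[str]:
    # Toy tokenizer (an assumption): lowercase, keep runs of word characters.
    return re.findall(r"\w+", text.lower())


class KeywordIndex:
    """Minimal inverted index: term -> set of document ids. No ML model involved."""

    def __init__(self) -> None:
        self.postings: dict[str, set[str]] = defaultdict(set)

    def add(self, doc_id: str, text: str) -> None:
        # Ingestion is just tokenization plus set insertions, so a new
        # document is searchable immediately, with no embedding step.
        for term in tokenize(text):
            self.postings[term].add(doc_id)

    def search(self, query: str) -> list[str]:
        # Score each document by how many distinct query terms it contains.
        scores: dict[str, int] = defaultdict(int)
        for term in set(tokenize(query)):
            for doc_id in self.postings.get(term, ()):
                scores[doc_id] += 1
        # Highest overlap first; ties broken by doc id for determinism.
        return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical documents, for illustration only.
index = KeywordIndex()
index.add("readme", "How to install the CLI")
index.add("auth", "AuthService validates the session token")
print(index.search("session token"))  # ['auth']
```

Real engines rank with BM25 and richer analyzers, but the ingestion path stays free of model calls, which is the point of the comparison.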

The Takeaway: If your AI agent needs to "read" a new GitHub repository or a 100-page PDF on the fly, File Search is instant. Vector Search is a coffee break. But if it reads something now and acts on it later, the Vector Tax is paid once up front and isn’t that big of a deal.

But Vector Trumps File in a lot of cases

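We measured accuracy using MRR (Mean Reciprocal Rank). Think of MRR as a "Direct Hit" score: for each query, take 1 divided by the rank of the first correct result (1.0 if it came first, 0.5 if second, and so on), then average across all queries. A 1.0 means the perfect answer was the very first result every time.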
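For readers who want to compute the score themselves, here is a minimal MRR sketch in Python. The document ids are hypothetical, and this version applies no rank cutoff (many benchmarks report MRR@10 instead; the cutoff used in this experiment isn't stated here).

```python
from collections.abc import Sequence


def reciprocal_rank(ranked_ids: Sequence[str], relevant_ids: set[str]) -> float:
    # 1 / (1-based position of the first relevant hit); 0.0 if none was retrieved.
    for position, doc_id in enumerate(ranked_ids, start=1):
        if doc_id in relevant_ids:
            return 1.0 / position
    return 0.0


def mean_reciprocal_rank(runs: Sequence[tuple[Sequence[str], set[str]]]) -> float:
    # Average the per-query reciprocal ranks over all queries.
    if not runs:
        raise ValueError("need at least one query")
    return sum(reciprocal_rank(ranked, relevant) for ranked, relevant in runs) / len(runs)


# Hypothetical runs: (ranked results for a query, set of correct doc ids).
runs = [
    (["d1", "d7", "d3"], {"d1"}),  # correct answer at rank 1 -> 1.0
    (["d4", "d2", "d9"], {"d2"}),  # correct answer at rank 2 -> 0.5
    (["d5", "d6", "d8"], {"d0"}),  # never retrieved -> 0.0
]
print(mean_reciprocal_rank(runs))  # 0.5
```

Because only the first correct hit counts, MRR rewards getting one answer to the top; it says nothing about whether the rest of the needed context was retrieved.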

We tested five datasets to see where the "Vector Tax" actually buys you better results:

- CodeXGLUE (Code): Matching natural-language (NL) queries to Python code.

- MS MARCO (Web): Real-world Bing search queries.

- SQuAD (Facts): Wikipedia-based question answering.

- HotpotQA (Reasoning): Questions that require linking two pieces of info.

- SciQ (Science): Hard science exam questions (Physics, Biology).

Scenario A: The Semantic Gap (Where Vectors Win)

When a user searches for "sort a list" but the code says bubble_sort, keyword search fails. Vectors bridge this gap by matching meaning rather than exact words. If your users are non-technical or vague, you must pay the tax.
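Here is a small Python illustration of that gap, assuming a simple regex tokenizer like many basic keyword setups use. Because `\w` includes the underscore, `bubble_sort` stays one token, so the query and the code share no terms at all. A code-aware analyzer that splits identifiers (the `split_identifiers` helper below is hypothetical) recovers "sort", but nothing lexical connects "list" to `arr`; that remaining gap is what embeddings are meant to close.

```python
import re


def tokenize(text: str) -> set[str]:
    # Toy tokenizer (an assumption): lowercase runs of word characters.
    # Note that \w matches "_", so "bubble_sort" is a single token.
    return set(re.findall(r"\w+", text.lower()))


def split_identifiers(tokens: set[str]) -> set[str]:
    # Hypothetical code-aware step: break snake_case identifiers into parts.
    return {part for token in tokens for part in token.split("_") if part}


query = "sort a list"
code = "def bubble_sort(arr):\n    ..."

shared = tokenize(query) & tokenize(code)
print(shared)  # set()

split_shared = split_identifiers(tokenize(query)) & split_identifiers(tokenize(code))
print(split_shared)  # {'sort'}
```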

Scenario B: The Precision Gap (Where Files Win)

In Science or complex Reasoning, words aren't just "vibes": they are specific entities. "Mitochondria" isn't just a "similar concept" to a cell; it's a specific key. Vector search often "drifts" toward things that sound similar but are factually wrong. Keyword search locks onto the target with surgical precision.

Take HotpotQA as the perfect example. It's a multi-hop reasoning dataset (e.g., 'Which magazine started first, A or B?'). Even though the benchmark marked a 'tie,' I noticed a disturbing trend: Vector search would often find the 'correct' document for Magazine A, but completely ignore the 'bridge' document for Magazine B because it wasn't 'semantically similar' enough to the query. Finding the answer isn't the same as having enough context to prove it. This is where Retrieval ends and Reasoning Sufficiency begins.
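HotpotQA annotates the supporting facts each question needs, so one way to quantify "Reasoning Sufficiency" is to check whether all required documents land in the top-k, not just the first one. The sketch below is a hypothetical metric (not necessarily what this experiment measured), with made-up document ids for the magazine question. It shows how a run can earn a perfect reciprocal rank while still missing half the evidence.

```python
from collections.abc import Sequence


def fully_supported(retrieved: Sequence[str], supporting: set[str], k: int) -> bool:
    # True only if EVERY required supporting document is in the top-k results.
    return supporting <= set(retrieved[:k])


def support_recall(retrieved: Sequence[str], supporting: set[str], k: int) -> float:
    # Fraction of the required supporting documents that made it into the top-k.
    return len(supporting & set(retrieved[:k])) / len(supporting)


# Hypothetical comparison question: "Which magazine started first, A or B?"
supporting = {"magazine_a", "magazine_b"}
retrieved = ["magazine_a", "unrelated_1", "unrelated_2", "magazine_b"]

first_hit = next(i for i, d in enumerate(retrieved, start=1) if d in supporting)
print(1 / first_hit)  # 1.0
print(fully_supported(retrieved, supporting, k=3))  # False
print(support_recall(retrieved, supporting, k=3))  # 0.5
```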

So, should we just go hybrid?

Yes, but it won’t be enough!

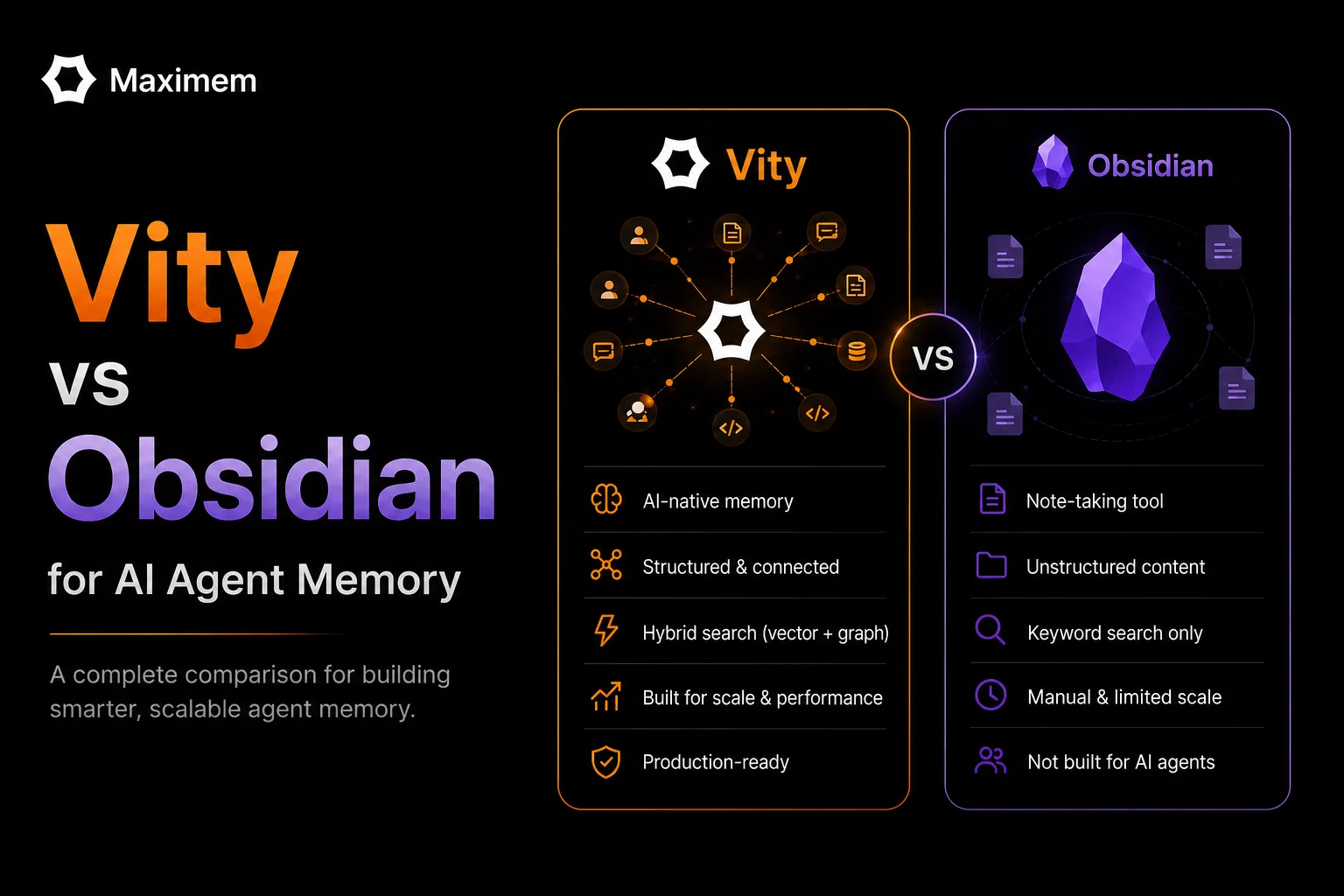

Building a 'good enough' Hybrid Search is a weekend project. But managing the lifecycle of a thought is a full-time infrastructure job. True context management requires more than just mixing keywords and vectors; it requires an Adaptive Architecture:

- The Ephemeral Buffer: Ingesting data in milliseconds so the agent can 'remember' a fact it learned ten seconds ago without waiting for a slow vector re-index.

- Lossless Compaction: Intelligently summarizing 100 retrieved shards into a 2k-token 'working memory' that doesn't lose the surgical details (like that 'Mitochondria' key).

- The Semantic Graph: Mapping implicit relationships, such as how a Slack thread from Tuesday relates to a bug in AuthService.ts on Friday, even when the words share zero similarity.

- Context Graph: Capturing "Decision Traces" and organizational tribal knowledge. This isn't just data; it's a map of how your company thinks, delivered to your agent in <400ms.
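The "good enough" hybrid search mentioned above is often built with reciprocal rank fusion (RRF), which merges a keyword ranking and a vector ranking using only rank positions, so the two engines' incompatible scores never need calibrating. This is a minimal sketch of that general technique, not necessarily how the experiment or Synap combines results; the document ids are hypothetical, and k=60 is the constant commonly used with RRF.

```python
from collections import defaultdict
from collections.abc import Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]], k: int = 60) -> list[str]:
    # Each ranked list adds 1 / (k + rank) to every document it contains;
    # a larger k flattens the advantage of the very top positions.
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical results for the query "mitochondria ATP production".
keyword_hits = ["mitochondria_ch3", "cell_intro", "atp_synthase"]
vector_hits = ["cell_intro", "chloroplast_ch4", "mitochondria_ch3"]

print(reciprocal_rank_fusion([keyword_hits, vector_hits]))
# ['cell_intro', 'mitochondria_ch3', 'chloroplast_ch4', 'atp_synthase']
```

Documents that both engines agree on rise to the top, which is exactly why this is a weekend project: it blends two rankings, but it does nothing about buffering, compaction, or the relationships listed above.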

You shouldn't have to build the plumbing for multi-tenant memory or context graphs. You should be building the agent.

Stop Building Plumbing, Start Building Agents

The "Do-It-Yourself" approach to AI context management has a steep learning curve and is killing productivity. We see brilliant teams spending 80% of their time debugging how to provide the right context, rather than why their agent actually failed the task.

At Maximem, we are building Synap because, for teams building AI agents, context management isn’t the core competence.

We handle the "Vector Tax," the context compaction, the semantic graph, the context graph and much more. We manage the infrastructure of "remembering" so you can focus on the hard part: building the agent.

The war isn't over who has the most data. It’s over who manages context the best.

--

If you are interested in looking at the raw outputs, please DM me.