Here is a workflow we see all the time: you hop into ChatGPT to brainstorm a product strategy. Minutes later, you are on Claude to draft a build plan. Next day, you are using Perplexity because you need competitor pricing and Claude isn’t great at live research. Three AI assistants. Three totally separate conversations. Each one blank.

If that sounds familiar, you are not alone. Most power users bounce between multiple LLMs daily, and every single one of them operates in its own silo. The result is fragmented memory about you, your projects, your tasks. Even within a single platform, memory is spotty at best. It decides, seemingly at random, which parts of your context to remember and fetch, and what it fetches is often not relevant to the current task. And then you reiterate. You burn more time re-explaining things than actually getting work done. In fact, when we helped people create their Year End Wrapped, the stats showed that users had, on average, revised their prompt in at least half of their conversations.

There is a bitter irony here. Back in 1885, Hermann Ebbinghaus ran his now-famous memory experiments and showed we forget roughly 70% of new information within a day. A 2015 replication in PLOS ONE confirmed the numbers still hold. We built AI partly to patch that biological gap - yet the AI apps themselves can’t remember what you told them last week.

And the cost is real. Cottrill Research reported in 2025 that nearly half of knowledge workers spend one to five hours every day looking for information they had already accessed. The UC Irvine research on interruptions confirms what we all experience - it takes about 23 minutes to get back in the zone after each break in focus.

So we got curious: what are ChatGPT, Claude, and OpenClaw actually doing under the hood when it comes to remembering you? And more importantly - what would it look like if memory genuinely worked the way your brain does, across every tool and conversation?

How ChatGPT Remembers You

ChatGPT’s memory is actually four things duct-taped together and it is simpler than you would guess.

There is a session metadata layer that grabs your device type, timezone, subscription tier, and how you have been using the product lately. It helps shape responses, sure, but nothing here survives past the current session. Close your laptop and poof - it is gone.

The piece most people think of as “memory” is a separate system for storing long-term facts. Your name. Your job. That you are vegetarian on Thursdays. That you prefer dark mode. One researcher who dug into this found 33 stored facts about himself - career aspirations, gym schedule, the whole picture. These facts ride along in every single prompt ChatGPT generates, whether they’re relevant to the current question or not.

Here’s where it gets surprising. For cross-chat continuity - the “how does it know what I was doing yesterday” question - there is no RAG pipeline. No vector search. No embeddings. ChatGPT just keeps lightweight summaries of your last ~15 conversations. Summaries of what you typed, specifically, not the AI’s answers. It is more of a vibes-based sketch of your recent interests than a proper record.
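To make the "always inject" idea concrete, here is a minimal sketch of how stored facts and a capped list of recent summaries could be stitched into a prompt preamble. This is our illustration, not ChatGPT's code: `build_context`, `RECENT_LIMIT`, and the output layout are all hypothetical names and choices.

```python
from collections import deque

# Assumption: the "~15" figure from the write-up, treated as a hard cap here.
RECENT_LIMIT = 15


def build_context(facts, summaries):
    """Join stored facts and recent summaries into one text preamble."""
    lines = ["Known facts about the user:"]
    lines += [f"- {fact}" for fact in facts]
    lines.append("Recent conversation summaries:")
    lines += [f"- {summary}" for summary in summaries]
    return "\n".join(lines)


# A deque with maxlen silently drops the oldest summary once the cap is hit.
summaries = deque(maxlen=RECENT_LIMIT)
for i in range(1, 21):
    summaries.append(f"chat {i}: asked about topic {i}")

context = build_context(["Prefers dark mode"], summaries)
```

After twenty chats, only the fifteen most recent summaries are left to inject. Everything older is simply gone, which is the lossy part.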

Then the current conversation gets a normal sliding window - full messages until you hit the token cap, at which point older stuff falls off.
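A sliding window is simple enough to sketch in a few lines. The version below assumes a crude word count in place of a real tokenizer, and the names `count_tokens` and `sliding_window` are ours, not anything from ChatGPT.

```python
def count_tokens(text: str) -> int:
    # Assumption: whitespace-separated words stand in for real model tokens.
    return len(text.split())


def sliding_window(messages: list[str], token_cap: int) -> list[str]:
    """Keep the most recent messages whose combined size fits under token_cap."""
    kept: list[str] = []
    used = 0
    # Walk backwards from the newest message so the oldest ones fall off first.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > token_cap:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    "we are planning a launch in March",
    "pricing is still undecided",
    "draft the announcement email",
]
window = sliding_window(history, token_cap=8)
```

With a cap of 8, the first message no longer fits, so the window holds only the last two.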

This setup is fast. Pre-computed, always ready, zero retrieval latency. But lossy summaries are, well, lossy. If your thinking on a project shifted subtly over three conversations last week, that nuance probably didn’t make the digest.

How Claude Remembers You

Claude does something different, and honestly, it is a more interesting engineering bet.

The basics are similar - there is a set of stored facts about you that gets included in each prompt. Your name, your preferences, your role. One nice touch: Claude will quietly update these in the background based on how your conversations go, rather than only storing things you explicitly tell it to remember.

The current conversation window is also familiar territory, just bigger. Claude reports a context budget around 190,000 tokens, which gives it meaningfully more room to hold an ongoing conversation without summarizing things away.

Where Claude takes a gamble is on historical context. Instead of pre-loading a summary of your recent chats (the ChatGPT approach), Claude has retrieval tools it can use - keyword search across past conversations, time-based lookups, that kind of thing. The operative word is “can.” These aren’t fired on every turn. Claude has to decide, mid-conversation, that digging into your history would be helpful. And then it has to execute the search well.

When it works, you get something ChatGPT can’t offer: actual detailed context from a conversation three weeks ago, not a compressed summary of it. When it doesn’t - because Claude didn’t think to look, or searched for the wrong thing - you get nothing. It is a high-ceiling, low-floor kind of system.
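The two kinds of lookup described above can be sketched as plain functions. This is a toy under our own assumptions: the `Conversation` type, `keyword_search`, and `since` are hypothetical names, and the real tools are surely more sophisticated than substring matching.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Conversation:
    started: datetime
    text: str


def keyword_search(conversations, query):
    """Return conversations containing every query word (case-insensitive)."""
    words = query.lower().split()
    return [c for c in conversations if all(w in c.text.lower() for w in words)]


def since(conversations, cutoff):
    """Return conversations that started at or after the cutoff."""
    return [c for c in conversations if c.started >= cutoff]


archive = [
    Conversation(datetime(2025, 1, 3), "Decided on usage-based pricing for the API"),
    Conversation(datetime(2025, 1, 20), "Drafted the onboarding email sequence"),
]

hits = keyword_search(archive, "pricing api")   # finds the full original text
misses = keyword_search(archive, "billing")     # wrong search term, empty result
recent = since(archive, datetime(2025, 1, 10))
```

The `misses` case is the low floor in miniature: the pricing decision is sitting in the archive, but a search for "billing" never surfaces it.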

ChatGPT vs. Claude: Memory at a Glance

| Capability | ChatGPT | Claude |
| :---- | :---- | :---- |
| Long-term user facts | Yes, injected every prompt | Yes, injected every prompt |
| Cross-chat continuity | Pre-computed summaries (\~15 recent) | On-demand search tools |
| Current session | Sliding window (full messages) | Rolling window (\~190K tokens) |
| Retrieval approach | Always inject summaries | Retrieve only when relevant |
| Cross-platform memory | No, ChatGPT only | No, Claude only |
| Bookmark / web context | No | No |
| Intelligent forgetting | No | No |

How OpenClaw Remembers You

Then there is OpenClaw, the open-source darling (150K+ GitHub stars, formerly called Clawdbot). Totally different philosophy. Nothing runs in the cloud. Your data stays on your machine.

What does memory look like when you own the whole stack? Markdown files. Literally. There is a daily log the agent scribbles to throughout the day, plus a long-term MEMORY.md file you can open in any text editor. When the agent needs to recall something, it runs two searches in parallel - one semantic (vector similarity, for fuzzy conceptual matches) and one keyword-based (BM25, for when you need to find “POSTGRES_URL” and not “that database thing”). The weighting is 70/30, semantic-first.
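Here is a rough sketch of what a 70/30 blend could look like once both searches have returned. It assumes each search hands back per-document scores already scaled to [0, 1], and it skips the embedding and BM25 steps entirely. How OpenClaw actually normalizes and combines scores is not something we know, so `hybrid_rank` and the file names are illustrative only.

```python
def hybrid_rank(semantic, keyword, semantic_weight=0.7):
    """Rank documents by a weighted sum of semantic and keyword scores.

    Assumption: both score dicts map document -> score in [0, 1].
    A document missing from one search contributes 0 for that side.
    """
    keyword_weight = 1 - semantic_weight
    docs = set(semantic) | set(keyword)
    combined = {
        doc: semantic_weight * semantic.get(doc, 0.0)
        + keyword_weight * keyword.get(doc, 0.0)
        for doc in docs
    }
    return sorted(combined, key=lambda doc: combined[doc], reverse=True)


# Hypothetical scores for a query like "where is POSTGRES_URL set?"
semantic_scores = {"notes/db.md": 0.9, "notes/deploy.md": 0.6}
keyword_scores = {"notes/deploy.md": 1.0, "notes/env.md": 0.8}

ranked = hybrid_rank(semantic_scores, keyword_scores)
```

In this example the exact keyword hit lifts `notes/deploy.md` above the document that was only a strong semantic match, which is the point of running both searches.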

There is a clever bit around context limits, too. When a long conversation approaches the token ceiling, OpenClaw runs compaction - but before it does, it flushes important details to disk. So even if the summarizer loses nuance, the raw facts survive on your hard drive. We wish more systems thought this carefully about data preservation.
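The flush-then-compact ordering is the important part, and it can be sketched briefly. In this simplified version we write every message to the log rather than picking out the important ones, use a word count as the token estimate, and take the summarizer as a parameter. `compact` and its arguments are our names, not OpenClaw's.

```python
import tempfile
from pathlib import Path


def compact(messages, token_limit, log_path, summarize):
    """Flush raw messages to disk, then swap them for a summary near the limit."""
    used = sum(len(m.split()) for m in messages)  # crude word-count estimate
    if used < token_limit:
        return messages  # still under the ceiling, nothing to do
    # Flush first, so the raw text survives even if the summary is lossy.
    with Path(log_path).open("a", encoding="utf-8") as log:
        for message in messages:
            log.write(f"- {message}\n")
    return [summarize(messages)]


with tempfile.TemporaryDirectory() as tmp:
    log_file = Path(tmp) / "daily-log.md"
    result = compact(
        ["POSTGRES_URL lives in the staging vault", "ship the fix on Friday"],
        token_limit=5,
        log_path=log_file,
        summarize=lambda msgs: f"summary of {len(msgs)} messages",
    )
    saved = log_file.read_text(encoding="utf-8")
```

The in-memory conversation shrinks to a one-line summary, but the exact sentence about `POSTGRES_URL` is still on disk for a later search to find.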

The obvious limitation: this memory lives and dies inside OpenClaw. If you are also a ChatGPT and Claude user - and most power users are - those worlds never touch.

The Gap None of Them Close

By now, you will have noticed the pattern: three platforms, three walled gardens. ChatGPT remembers you in ChatGPT. Claude remembers you in Claude. OpenClaw remembers you in OpenClaw.

Every fresh chat starts with a siloed memory of you and your work. What’s the project? What were the constraints again? Didn’t you already decide on that pricing model? You re-explain. You re-paste. You lose twenty minutes of momentum, again.

The fix isn’t better recall inside any one tool. It is a memory layer that spans all of them.

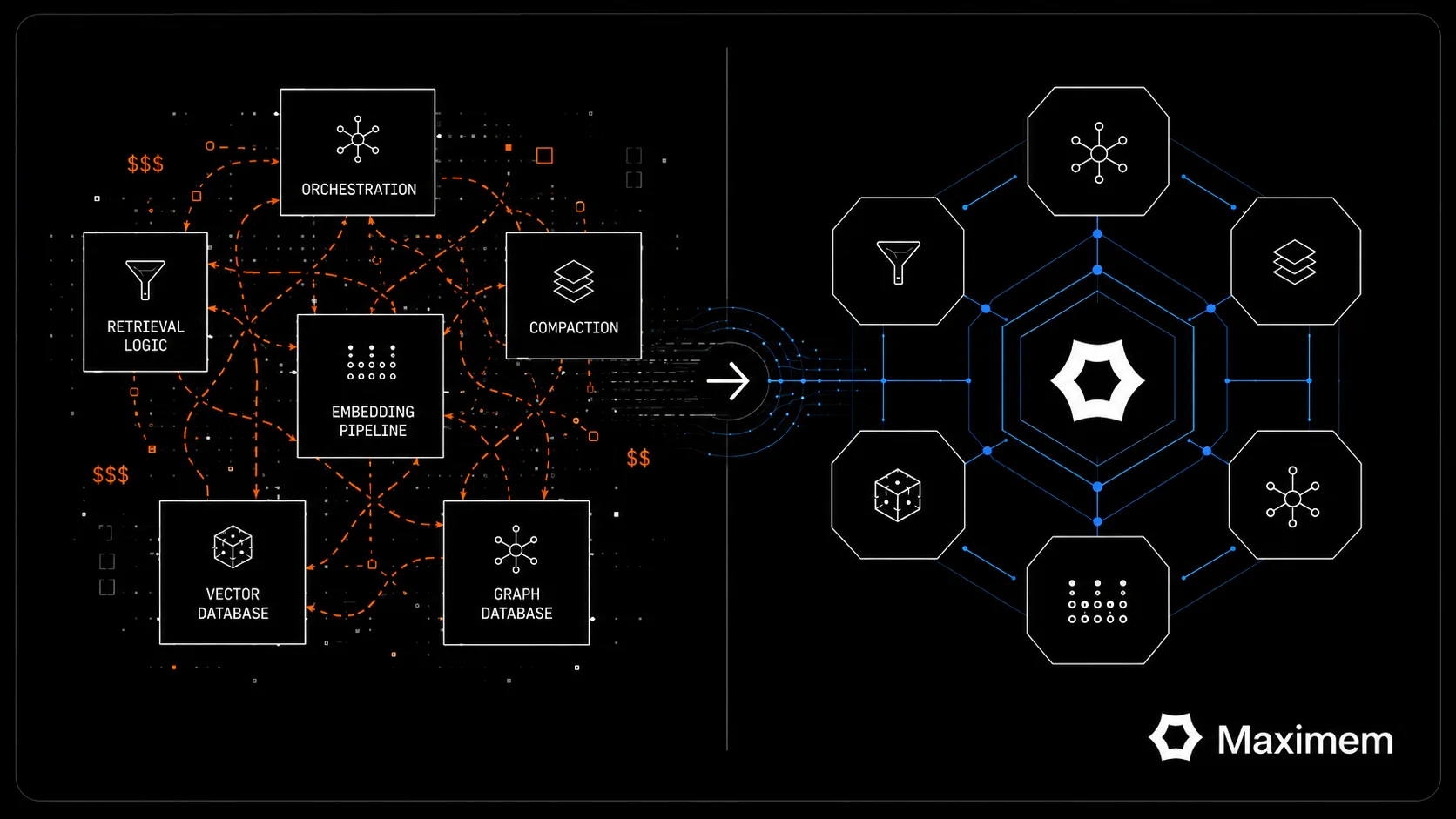

Maximem Vity: Memory That Works the Way You Do

That is what we built. Maximem Vity is a private, secure cloud vault. It ships as a Chrome extension and an OpenClaw plugin. One installation, no server setup, and it weaves a single knowledge base from your conversations across ChatGPT, Claude, Gemini, and Perplexity. It also pulls in your Chrome bookmarks and bookmarked X/Twitter content. One unified memory, sitting above every platform.

The core mechanic: when you start typing on any supported AI app, Vity surfaces relevant context from your entire history. Not just from this platform, from all of them. The AI you are talking to gets access to things you figured out in a completely different tool last week.

How This Plays Out in Real Workflows

Let us paint a few pictures, because the concept clicks better with concrete examples.

Take an executive who needs to draft an all-hands memo about a new company initiative. She opens ChatGPT, types one line. Before she hits enter, Vity has already pulled three things from her conversation history: that the team mood right now is ‘cautiously optimistic’ after recent budget cuts, that the memo should name-check the Synergy Project and credit the Innovations Task Force, and that her communication style leans toward ‘Shared Future’ and ‘Visionary Leadership.’ The first draft reads like her comms director wrote it. Not bad for a one-sentence prompt.

Or consider something more technical. A PM on our team was writing a user story for a payments feature in Claude. She barely started typing before Vity had surfaced the team’s standard PRD format (“As A > I Want To > So That,” followed by Definition of Done), the success metric she had settled on a month earlier (15% WAU lift), and the hard constraint that anything new had to integrate with the existing Wallet module. That is three pieces of context from three different prior sessions, stitched together and injected before she finished her prompt.

Engineers see similar benefits. One of our early testers uses Claude heavily for API documentation. His team has specific conventions - snake_case for parameters, a particular markdown heading structure, JSON responses with a ‘status’ field. He used to paste a style guide into every new session. Now Vity handles it. The generated docs match team standards on the first pass.

Even personal tasks benefit. We watched someone plan a business trip to the CMPL Expo in Mumbai and Vity automatically pulled her airline preference (Indigo out of Bangalore), accommodation budget (₹5,000/day), and taste for boutique hotels. When she asked for dinner recommendations near the venue, it knew she wanted modern Indian or European food in a setting quiet enough for a business conversation. All from fragments scattered across earlier chats she’d probably forgotten about.

Why “Active Intelligence” Is the Real Differentiator

If cross-platform reach were the whole story, we would basically be a fancy clipboard manager. That is not what’s happening here.

Every existing memory system - ChatGPT’s, Claude’s, OpenClaw’s - is fundamentally passive. Store a fact, replay it later. Vity does something more ambitious. It evaluates what it captures, looking for patterns and connections across your conversations. An idea you explored in a Perplexity research session three weeks ago might be directly relevant to a problem you are wrestling with in Claude today. Vity spots that link and brings it forward. It is building a synthesis layer - less filing cabinet, more thinking partner.

There is also the forgetting problem, which nobody else is seriously tackling. Your 500th memory shouldn’t have the same weight as your 5th. Last Tuesday’s takeout order, a debugging session for a bug you patched two days later, a one-off meeting venue - these clog up your context if you let them accumulate forever. Vity manages the lifecycle, keeping the signal-to-noise ratio healthy as your knowledge base grows. Stuff that matters stays prominent. Stuff that doesn’t fades gracefully.
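To give a feel for what "fading gracefully" can mean, here is one common way to model it: exponential decay with a half-life. Treat this purely as an illustration of the general idea, not a description of how Vity scores memories. The half-life, threshold, importance values, and function names are all assumptions made for the example.

```python
def relevance(importance, age_days, half_life_days=30.0):
    """Importance decayed exponentially: it halves every half_life_days."""
    return importance * 0.5 ** (age_days / half_life_days)


def still_prominent(memories, threshold=0.1):
    """Keep memories whose decayed relevance is at or above the threshold."""
    return [
        m for m in memories
        if relevance(m["importance"], m["age_days"]) >= threshold
    ]


# Hypothetical memories, both 60 days old but with different importance.
memories = [
    {"text": "takeout order", "importance": 0.2, "age_days": 60},
    {"text": "pricing model decision", "importance": 0.9, "age_days": 60},
]
kept = still_prominent(memories)
```

Both memories are the same age, but the low-importance one has decayed below the threshold while the pricing decision is still well above it.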

Net effect: your AI conversations stop feeling like they’re starting from scratch. They feel like picking up mid-thought with someone who’s actually been paying attention.

The Full Picture

| Capability | ChatGPT | Claude | OpenClaw | Maximem Vity |
| :---- | :---- | :---- | :---- | :---- |
| Platform memory | ✓ | ✓ | ✓ | **✓ (across all)** |
| Cross-LLM memory | ✗ | ✗ | ✗ | **✓** |
| Web / bookmark context | ✗ | ✗ | ✗ | **✓** |
| Active consolidation | ✗ | ✗ | ✗ | **✓** |
| Intelligent forgetting | ✗ | ✗ | ✗ | **✓** |
| User-controlled | Limited | Limited | ✓ | **✓** |
| Works with local AI | ✗ | ✗ | Native | **✓ (via plugin)** |

The Future of AI Memory Is Cross-Platform

Credit where it is due - each of these systems reflects sharp engineering thinking. ChatGPT’s layered injection is elegant and fast. Claude’s retrieval-based approach trades consistency for depth, which makes total sense for complex sessions. OpenClaw’s radical transparency is refreshing if you are tired of black-box cloud systems.

But they all share the same blind spot. They remember you inside their own ecosystem and nowhere else.

That might have been fine two years ago, back when most people had one AI tool they used for everything. It doesn’t reflect reality anymore. The power users we talk to regularly touch three or four LLMs in a given week. Their memory layer can’t live inside any one of those tools - it has to sit above all of them, accumulating context the way your actual brain does as you move between tasks and tools.

That is the bet we are making with Maximem Vity. Not marginally better memory for one AI. A real personal knowledge layer that makes every AI you touch smarter about who you are and what you are trying to get done.

Stop explaining. Start creating.

Try Maximem Vity:

Get it on the Chrome Web Store | OpenClaw

Sources and References

- Ebbinghaus, H. (1885). Memory: A Contribution to Experimental Psychology.

- Murre, J.M.J., Dros, J. (2015). Replication and Analysis of Ebbinghaus’ Forgetting Curve. PLOS ONE.

- Cottrill Research (2025). Survey: Workers Spend Too Much Time Searching for Information.

- Mark, G., Gudith, D., Klocke, U. University of California, Irvine. The Cost of Interrupted Work.

- Asana. The Anatomy of Work Index: Context Switching Costs.

- Gupta, M. (2025). I Reverse Engineered ChatGPT’s Memory System. manthanguptaa.in

- Gupta, M. (2025). I Reverse Engineered Claude’s Memory System. manthanguptaa.in

- Gupta, M. (2025). How Clawdbot Remembers Everything. manthanguptaa.in