Every few months, someone on my team switches their default AI. Last quarter a founder-friend went all-in on Claude after being a ChatGPT loyalist for over a year. Before that, one of our engineers had a brief Gemini phase mostly because of the million-token context window, partly because he wanted to be contrarian about it. Each time, same ritual: spend the first week re-explaining everything. Your writing style. Your stack. That you prefer named exports. The name of your dog. All of it, poof.

We started calling it "digital amnesia" around the office, and honestly it felt a bit dramatic until we started hearing the exact same phrase from users who had nothing to do with us. A PM at a mid-stage startup in San Francisco used those words unprompted on a user research call. So did a solo founder in Toronto. Turns out the experience is so universal that people independently arrive at the same name for it.

This article is about that problem - the memory portability problem - and why I think it is one of the most consequential unsolved issues in how humans work with AI. It is also about where the market is heading, because the last few months have handed us a lot of signal.

AI was not designed to remember

Rewind to 2023. AI tools had no memory at all. You opened ChatGPT, had a conversation, closed the tab, and next time a blank slate greeted you. The entire interaction model was stateless. For simple lookups this was fine. For anything resembling ongoing work it was genuinely maddening, the kind of maddening where you find yourself keeping a Google Doc of "things to remind ChatGPT about" like some kind of deranged onboarding manual for a machine.

Then memory showed up. ChatGPT shipped it around early 2024. Claude followed. The implementations are more different than most people realize. I wrote a detailed technical breakdown a few weeks ago comparing ChatGPT, Claude, and OpenClaw under the hood (https://www.maximem.ai/blog/ai-apps-memory). But the gist is the same: the AI retains something about you between sessions so future responses feel less generic.

This was a big deal. It really was. Suddenly your AI knew you preferred Python over JavaScript, that you were fundraising, that you liked short answers. The before-and-after was noticeable within days.

But it created a new problem. A sneaky one that took a while to become obvious.

When memory becomes a trap

Here is a question I have been asking basically everyone in the AI space for the past six months: how many LLMs do you use regularly?

Nobody says one.

The typical answer is two or three. Often four. Perplexity, Parallel AI, or Google Search AI Mode for research; these are just better at citing sources and I have stopped pretending otherwise. ChatGPT for first drafts of long-form content, because the voice controls are good and I have already trained it on my style. Claude for code, for analysis, for anything where I need the model to actually think before it talks. Gemini when I need something grounded in Google's ecosystem, like pulling context from my Drive, or when I want to play around with its image-generation capabilities. Manus when I want to get something done. Gamma or GenSpark when I need to design a deck for, say, a talk or a working session.

This is not a power-user edge case. This is Tuesday for anyone who takes AI seriously.

And yet every single one of these tools stores your memory in its own little silo. What Claude knows about your Q3 OKRs, ChatGPT has no idea about. The research you did in Perplexity on competitor pricing? Invisible to Claude and everyone else. You end up either repeating yourself across tools, which defeats the purpose of memory, or you stop switching, because the AI you have already trained feels "smarter" even when a different model would objectively be better for the task at hand.

That second thing is the subtle danger, and I do not think enough people are talking about it. Memory becomes a moat. Not because the product is superior, but because the product knows you. Knowing you is a form of lock-in that has nothing to do with model quality, benchmark scores, or pricing. It is stickiness masquerading as intelligence.

Anthropic just said the quiet part out loud

A couple of days ago, Anthropic launched something called Import Memory (https://claude.com/import-memory). Dead simple pitch: copy a prompt, paste it into your current AI, get back a summary of what it knows about you, paste that into Claude's memory settings. Done in under a minute.

Three friends sent me the link on launch day. It still makes me laugh.

I want to be fair here, because the feature is well-executed. Anthropic identified that accumulated memory was the single biggest barrier to switching -- not model quality, not price, not features -- and they built a one-step bridge. The product instinct behind it is sharp. If I were advising a friend on whether to try Claude and they said "but ChatGPT already knows me," I would tell them to use the import tool. It solves that specific problem cleanly.

But -- and I have been turning this over in my head for days -- it solves the moving-day problem, not the living-in-two-places problem.

A person who imports their ChatGPT context into Claude on Monday will open Perplexity on Tuesday to research something new. They will brainstorm in ChatGPT on Wednesday because old habits. By Thursday, Claude's imported snapshot is already stale. The preferences diverge. The context fragments. You are back to digital amnesia, just with better first-day onboarding.

I am not saying this to dunk on Anthropic. I am saying it because the distinction between memory migration and memory portability is the entire crux of what we are working on, and Claude's import feature is the clearest possible illustration of why the two are different things.

Migration is a one-time event. You move your stuff from apartment A to apartment B.

Portability is a continuous state. Your stuff is everywhere you are, all the time, without you having to think about it.

The market, meaning actual humans trying to get work done, needs portability.
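
To make the apartment analogy concrete, here is a minimal sketch of the difference. Everything in it is hypothetical and for illustration only (the `Tool` and `SharedVault` names, the dictionary-as-memory model); it is not how any of these products store memory. Migration copies a snapshot once, so it misses whatever gets learned afterwards. Portability points every tool at the same store.

```python
from dataclasses import dataclass, field


@dataclass
class Tool:
    """A hypothetical AI tool with its own view of what it knows about you."""
    name: str
    memory: dict = field(default_factory=dict)


def migrate(source: Tool, target: Tool) -> None:
    """One-time migration: copy a snapshot of source's memory into target."""
    target.memory = dict(source.memory)  # a copy, frozen at this moment


class SharedVault:
    """Portability: every attached tool reads and writes one shared store."""

    def __init__(self) -> None:
        self.memory: dict = {}

    def attach(self, tool: Tool) -> None:
        tool.memory = self.memory  # same object, not a copy


# Monday: migrate. Wednesday: the old tool learns something new.
chatgpt = Tool("chatgpt", {"style": "short answers"})
claude = Tool("claude")
migrate(chatgpt, claude)
chatgpt.memory["project"] = "Q3 pricing research"
stale = "project" not in claude.memory  # the snapshot never saw Wednesday

# The same week with a shared vault instead.
vault = SharedVault()
tool_a, tool_b = Tool("chatgpt"), Tool("claude")
vault.attach(tool_a)
vault.attach(tool_b)
tool_a.memory["project"] = "Q3 pricing research"
fresh = tool_b.memory.get("project") == "Q3 pricing research"
```

In the migrated setup the imported copy is already out of date by midweek; in the shared setup there is nothing to go out of date.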

What this actually looks like in practice

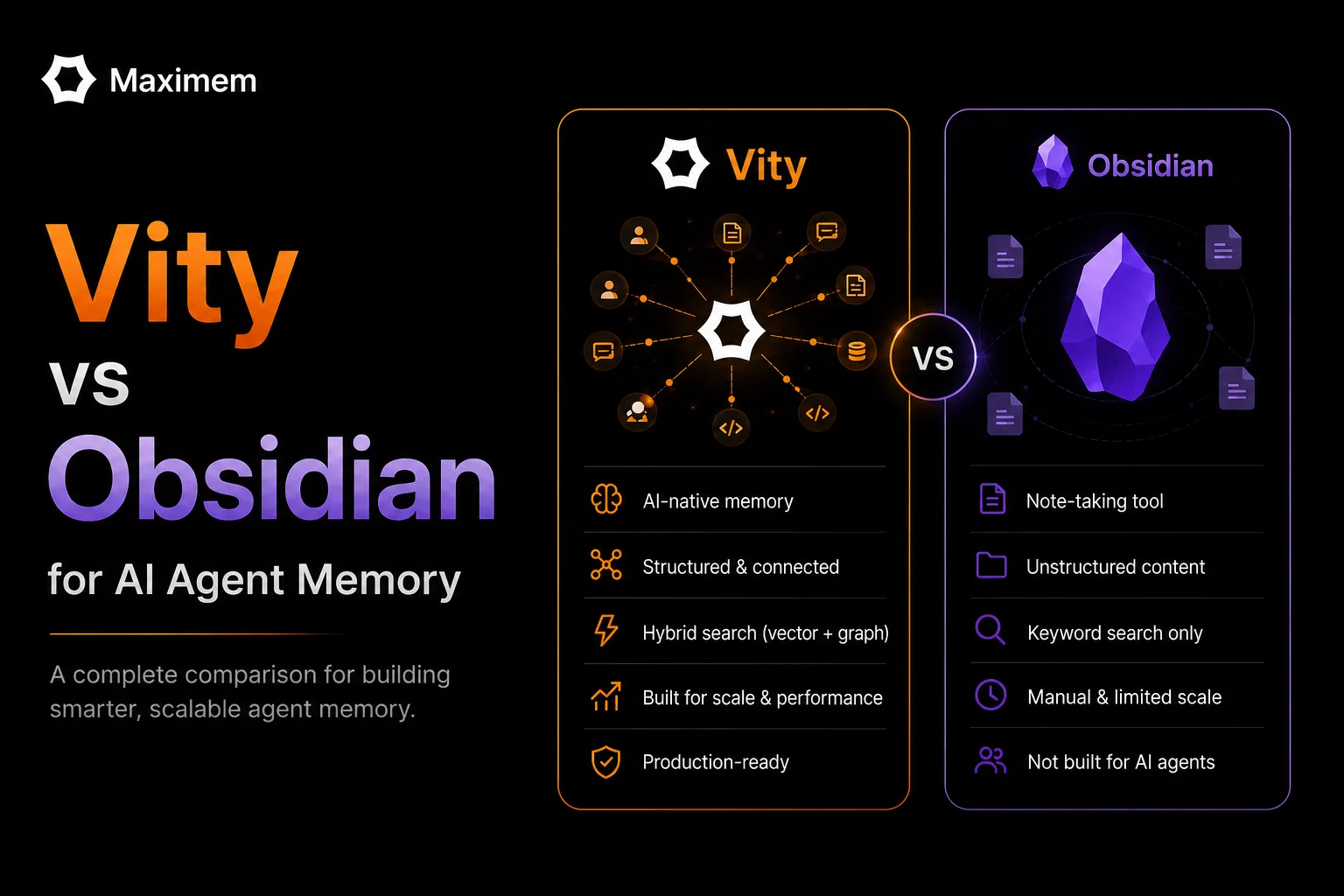

We have been building Vity (https://www.maximem.ai/vity) for this exact problem, and I will try to describe it without sounding like a product page, though fair warning, this is the section where that is hardest to avoid.

You install a Chrome extension. That is it, that is the setup. Vity watches your conversations across ChatGPT, Claude, Gemini, Perplexity -- basically any browser-based AI tool -- and extracts the stuff that matters. Not raw transcripts. The distilled context: your preferences, your active projects, the decisions you have made, the technical choices you have locked in. It stores all of this in an encrypted semantic graph that belongs to you.

Then, when you start a new conversation in any AI tool, Vity checks the vault, finds the relevant memories, and offers to inject them into the conversation context. The lookup is automatic; the injection takes one click and happens only with your permission. No copy-paste. No "here is what you need to know about me" preamble that everyone has saved in a text file somewhere. Do not pretend you do not.
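
For the technically curious, the permission-gated injection step can be sketched roughly like this. This is an illustration under assumptions, not Vity's actual code: the `Memory` record, the preamble format, and the `approve` callback (standing in for the one-click confirmation) are all hypothetical names, and the retrieval of relevant memories is assumed to have already happened.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Memory:
    """A distilled piece of context, not a raw transcript (hypothetical shape)."""
    kind: str  # e.g. "preference", "project", "decision"
    text: str


def build_preamble(memories: list[Memory]) -> str:
    """Format retrieved memories as a short context block for the model."""
    lines = [f"- ({m.kind}) {m.text}" for m in memories]
    return "Context about the user:\n" + "\n".join(lines)


def inject(
    prompt: str,
    memories: list[Memory],
    approve: Callable[[list[Memory]], bool],
) -> str:
    """Prepend memories to the prompt only if the user approves."""
    if not memories or not approve(memories):
        return prompt  # nothing relevant, or the user declined
    return build_preamble(memories) + "\n\n" + prompt


relevant = [
    Memory("preference", "Prefers short answers"),
    Memory("project", "Researching competitor pricing"),
]
approved = inject("Draft a pricing page outline.", relevant, lambda ms: True)
declined = inject("Draft a pricing page outline.", relevant, lambda ms: False)
```

The point of routing everything through `approve` is that declining leaves the prompt byte-for-byte unchanged, so the user always decides what a given tool gets to see.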

A user in our early access group - she runs a two-person agency in Mumbai - told us she used to spend the first three messages of every ChatGPT conversation re-establishing project context. "Now it just knows," she said. She had been researching a client's industry in Perplexity an hour earlier, and the relevant context had already carried over to Claude before she typed her first prompt.

Or take the less dramatic but arguably more common case: a developer who uses Claude for code and ChatGPT for documentation. He told us he had been manually copy-pasting function signatures and architectural decisions between the two for months. Months! Vity made that invisible. What he taught Claude about his codebase on Monday was already in ChatGPT's context when he sat down to write docs on Tuesday.

If cross-platform reach were the whole story, we would basically be a glorified clipboard manager. The thing that actually makes this work is the semantic graph. Vity does not just store text; it understands the relationships between your memories. Your preference for serverless architecture is connected to your AWS project, which is connected to the cost constraints your investor mentioned. When any of those topics comes up in any AI tool, the relevant cluster activates. It is not keyword matching. It is contextual recall.

(That last paragraph sounded dangerously close to marketing copy. I know. But it is also just... how it works.)
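
If you want a mental model for "the relevant cluster activates," here is a toy sketch using the serverless, AWS, and cost-constraint example from above. It is an assumption-laden illustration, not the real implementation: the node labels, the `EDGES` list, and the two-hop limit are made up, and the seed is picked by exact label here, whereas a real system would presumably find it by semantic similarity. The part it does show is the graph idea: once one memory is hit, its connected neighbours come along.

```python
from collections import deque

# Undirected edges between hypothetical memory nodes.
EDGES = [
    ("prefers serverless", "AWS project"),
    ("AWS project", "investor cost constraints"),
    ("prefers named exports", "TypeScript style guide"),
]


def build_graph(edges):
    """Build an adjacency map from a list of undirected edges."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def activate(graph, seed, max_hops=2):
    """Return the seed plus every memory within max_hops edges of it (BFS)."""
    if seed not in graph:
        return set()
    seen = {seed}
    queue = deque([(seed, 0)])
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbour in graph[node]:
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append((neighbour, hops + 1))
    return seen


graph = build_graph(EDGES)
cluster = activate(graph, "investor cost constraints")
```

Mentioning the investor's cost constraints pulls in the AWS project and the serverless preference, while the unrelated named-exports memory stays out of the context.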

The privacy thing

I would be dodging something important if I skipped this. A cross-platform memory layer that watches your AI conversations is, by definition, a sensitive piece of infrastructure. A friend who advises a VC fund told me bluntly: "Cool product, terrifying surface area." He is right to flag it.

So here is our answer, and I will be concrete rather than hand-wavy about it. Your memory graph is encrypted at rest and in transit. The encryption keys are yours -- not ours. We architecturally cannot read your memories even if someone showed up with a warrant and a compelling PowerPoint. This is not a policy we pinky-promise to uphold. It is a technical constraint we designed in from day one.

Why does this matter so much? Think about what your AI actually knows about you after a few months of heavy use. Your health questions. Your salary negotiations. Your relationship stuff that you would never type into a search engine but somehow feel okay asking an AI about at 1 AM. Half-formed business ideas. Anxiety about whether your startup is going to make it. This is intimate data -- more intimate than your browser history, more intimate than your email -- and a memory layer that treats it casually is a liability, not a feature.

We take this seriously enough that it shapes our product decisions in ways that are sometimes inconvenient for us. We cannot do server-side analytics on memory content, for instance, because we literally cannot see it. Our usage dashboards are blind to what you are actually storing. That is a real limitation for a startup trying to understand its users, and we chose it anyway.

Where this goes from here

I will close with where I think the next two or three years shake out, understanding that predictions from startup founders about their own market should be taken with appropriate skepticism.

Every major lab is going to ship better memory. That is already happening and it will accelerate. The recall will get sharper, the preference tracking more nuanced, the retrieval faster. Not controversial.

People will use more AI tools, not fewer. The models are specializing -- Claude is becoming the thinking tool, ChatGPT has the broadest consumer surface, Perplexity owns search, Gemini is woven into Google's productivity stack. The fantasy that one AI will "win" and everyone else packs up? I just do not see it. Multi-tool workflows are already the norm and they are going to become more entrenched as each platform leans harder into what it does best.

And here is the piece I think people are underestimating: a year from now, the sum total of what your AI tools collectively know about you will be one of the most valuable digital assets you own. Not in a crypto-bro "tokenize your data" way -- in a practical, daily-productivity way. The person who has a unified memory layer across all their AI tools will get meaningfully better outputs than the person who does not. The gap will be obvious and it will compound over time.

We are building the infrastructure for that future. Not because Claude's import feature validated our thesis -- though the timing was pretty nice, I will not lie. But because the fragmented-memory problem only gets worse as AI tools multiply, and as far as I can tell, nobody else is building the connective tissue between them.

If you use more than one AI and you are tired of the re-explaining ritual, that is the problem Vity exists to solve. We are live on the Chrome Web Store. Come see if it clicks for you.

And if you are a developer building AI-powered tools and want your users to benefit from persistent, cross-platform memory without building the whole stack yourself -- that is what Synap, our developer SDK, is for. Same vault. Different interface.

I am the founder of Maximem. We are building the private memory layer for AI. Find us at https://www.maximem.ai. I previously wrote a technical comparison of how ChatGPT, Claude, and OpenClaw implement memory at https://www.maximem.ai/blog/ai-apps-memory.