Image Processing for AI Agents: Embeddings, Vision Models, and When to Use Each

You're staring at 10,000 product photos. Your agent needs to work with them. But you're facing an impossible choice: send them all through a vision model and watch your budget evaporate, or use embeddings and lose the reasoning you desperately need.

This isn't actually a binary choice. Here's why.

I learned this the hard way. I spent $8,400 last year sending 15,000 images through Claude vision, then realized I'd been solving the wrong problem entirely. I'd picked one tool and hoped it covered everything. It didn't.

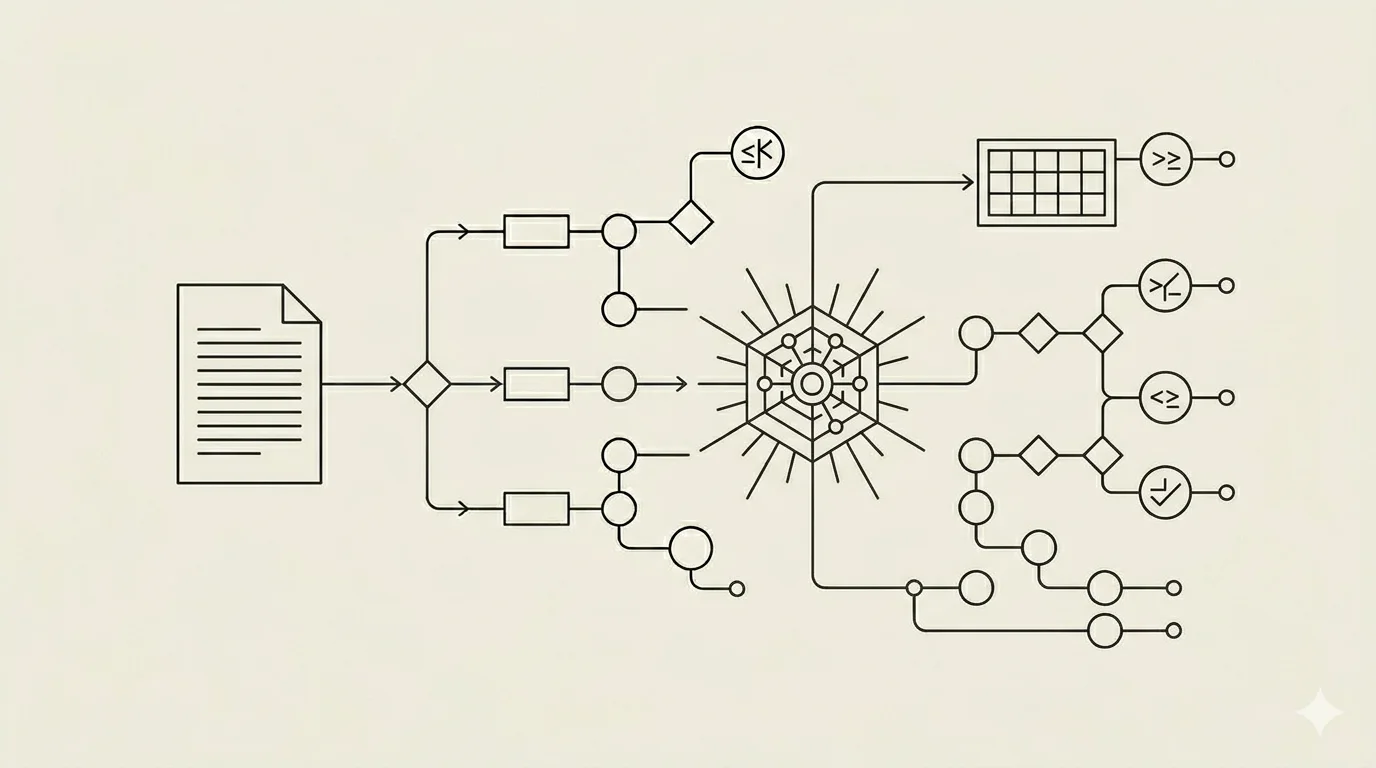

Two Approaches, Built for Different Jobs

Approach A: Direct Vision Model Processing

You send the image directly to Claude vision, GPT-4V, Gemini, or whichever multimodal model you're using. The model sees the image and reasons about it in language.

Use this when you need the agent to understand, describe, analyze, or extract specific information. "What does this screenshot show?" "Extract the table from this receipt." "Is this product photo compliant with our brand guidelines?" This is analysis. This is reasoning. The vision model walks through what it observes, layer by layer, and returns structured output.

The advantage runs deep. Full visual understanding. The model captures context, layout, color, spatial relationships, text within the image. It doesn't just say "this is a photo." It explains what's happening and why. Claude vision is billed at normal token rates, $3 per million input tokens and $15 per million output tokens on Sonnet-class models, with images counted as input tokens. GPT-4V and GPT-5.1 include vision natively. Gemini is multimodal with a context window large enough for multiple images at once.

The disadvantage is cost. Per-image expense. Scale becomes a problem fast. Run 10,000 images through vision models and your budget vaporizes. This is exactly what happened to me.
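To get a feel for the per-image math before you commit to a batch, here is a rough estimator. It is a sketch, not a billing tool. It assumes Anthropic's documented rule of thumb that an image costs roughly (width × height) / 750 tokens, and it uses the $3 and $15 per-million-token prices quoted above as defaults. The function names and the `output_tokens_per_image` parameter are mine, not part of any API.

```python
import math


def estimate_image_tokens(width_px: int, height_px: int) -> int:
    """Approximate input tokens for one image.

    Assumption: tokens ~= (width * height) / 750, a rule of thumb
    from Anthropic's vision documentation. Other providers count differently.
    """
    return math.ceil(width_px * height_px / 750)


def estimate_batch_cost(
    n_images: int,
    width_px: int,
    height_px: int,
    output_tokens_per_image: int,
    input_price_per_mtok: float = 3.0,    # USD per million input tokens
    output_price_per_mtok: float = 15.0,  # USD per million output tokens
) -> float:
    """Rough USD cost of sending n_images through a vision model once."""
    input_tokens = n_images * estimate_image_tokens(width_px, height_px)
    output_tokens = n_images * output_tokens_per_image
    total = input_tokens * input_price_per_mtok + output_tokens * output_price_per_mtok
    return total / 1_000_000


# Example: 10,000 images at 1000x1000 px, asking for ~300 output tokens each.
batch_cost = estimate_batch_cost(10_000, 1000, 1000, output_tokens_per_image=300)
```

The estimate ignores prompt text, retries, and multiple passes over the same image, all of which multiply the real bill.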

Approach B: Image Embeddings for Search and Retrieval

You convert images into vector representations using embedding models. The standard picks for general-purpose work are OpenAI CLIP and SigLIP 2—they create a shared text-image vector space, which means you can search text against images and images against images in the same database. EVA-CLIP is another solid option. BLIP-2 works well if you need both captioning and embeddings. Cohere Embed v3 is the commercial API option if you'd rather not host the model yourself.

Store those vectors in a vector database: Pinecone, Qdrant, Weaviate, or Milvus. These databases store fixed-length vectors regardless of where they came from, so image and text embeddings produced by the same model can share one index. Now you can search by similarity. "Find the 5 most similar product images to this one." The database returns matches in milliseconds.
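If "search by similarity" feels abstract, this is roughly what the database does for you, minus the indexing tricks that make it fast. The sketch below is a brute-force cosine-similarity search over an in-memory dictionary. The three-dimensional vectors and file names are toy values I made up; real CLIP or SigLIP embeddings have hundreds of dimensions, and a real vector database uses approximate nearest-neighbor indexes instead of scanning every row.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_similar(
    query: Sequence[float],
    index: Dict[str, Sequence[float]],
    k: int = 5,
) -> List[Tuple[str, float]]:
    """Return the k (image_id, score) pairs most similar to the query vector."""
    scored = [(image_id, cosine_similarity(query, vec)) for image_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy index: hypothetical image ids mapped to made-up embedding vectors.
toy_index = {
    "red_sneaker.jpg": [0.9, 0.1, 0.0],
    "blue_sneaker.jpg": [0.8, 0.2, 0.1],
    "leather_boot.jpg": [0.1, 0.9, 0.2],
}

matches = top_k_similar([1.0, 0.0, 0.0], toy_index, k=2)
```

Because the query vector can come from either an image or a text prompt in a shared embedding space, the same function covers both image-to-image and text-to-image search.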

Use embeddings when you're searching, filtering, or matching across a large collection. Your agent isn't analyzing one image. It's finding the right images from hundreds or thousands at once.

The advantages are speed, scale, and cost efficiency for bulk operations. The tradeoff is that embeddings lose fine-grained visual detail. They can't reason about what's in an image. They can only say "this is similar to that."

The Hybrid Pattern (This Is What Actually Works)

Most production systems that work well use both approaches together. Here's the real workflow:

Step 1: Use image embeddings to search and narrow down. You have 10,000 product photos in your catalog. Vector search retrieves the top 5 most similar to what your customer described. The operation takes about 40 milliseconds.

Step 2: Send those 5 images to a vision model for detailed analysis. "Which of these matches the customer's actual description?" Now the vision model isn't processing thousands. It's reasoning about a handful. The model sees nuance because it's focused.

This gives you cost efficiency. You pay for vision model calls on a small subset. Speed comes from vector search in milliseconds. Quality emerges because reasoning happens where it matters most. A team at an e-commerce platform I worked with applied this pattern to their product catalog and reduced image analysis costs by 87 percent in three months. Same analyses they'd always run, just smarter routing through the system.
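The two steps above fit in a few lines once you treat the embedding model, the vector database, and the vision model as pluggable functions. This is a sketch of the shape of the pipeline, not a production implementation. Every name here (`search_then_analyze`, `embed_query`, `vector_search`, `analyze_images`) is hypothetical, and the `fake_*` stubs stand in for real services so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class HybridResult:
    candidates: List[str]  # image ids returned by vector search
    answer: str            # whatever the vision model said about them


def search_then_analyze(
    query: str,
    embed_query: Callable[[str], Sequence[float]],
    vector_search: Callable[[Sequence[float], int], List[str]],
    analyze_images: Callable[[str, List[str]], str],
    top_k: int = 5,
) -> HybridResult:
    """Step 1: narrow with embeddings. Step 2: reason over the shortlist."""
    query_vector = embed_query(query)
    candidates = vector_search(query_vector, top_k)
    # Only top_k images ever reach the (expensive) vision model.
    answer = analyze_images(query, candidates)
    return HybridResult(candidates=candidates, answer=answer)


# Stand-in stubs so the sketch runs without any external service.
def fake_embed(text: str) -> List[float]:
    return [float(len(text)), 1.0]


def fake_search(vector: Sequence[float], k: int) -> List[str]:
    return [f"img_{i}.jpg" for i in range(k)]


def fake_vision(prompt: str, image_ids: List[str]) -> str:
    return f"Compared {len(image_ids)} images for: {prompt}"


result = search_then_analyze("blue running shoes", fake_embed, fake_search, fake_vision)
```

Passing the three stages in as callables keeps the routing logic independent of which embedding model, database, or vision provider you end up using.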

When should you do this? The breakeven lands somewhere around 100 to 200 images. Below that, process everything through a vision model. The total API cost is lower than the cost of building and running an embedding pipeline, and latency is acceptable. Above that threshold, searching with embeddings first becomes the obvious choice.

This pattern also enables multimodal RAG. It's the image equivalent of text RAG. You embed images and text in the same vector space, search across both modalities, and send relevant results to the vision model for reasoning. LlamaIndex and LangChain support this natively now. A customer uploads a photo with "find me shoes that look like this" and adds "I want something under $100 and in blue." You search both the image embeddings and text embeddings in the same database and get back results that match both constraints.
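Here is one way the shoe query could be scored, as a sketch under some assumptions of my own. I treat "looks like this" as an image-vector similarity, "in blue" as a text-vector similarity in the same shared space, and "under $100" as a plain metadata filter applied before scoring. The `Product` record, the `image_weight` blend, and the function names are illustrative and not taken from LlamaIndex, LangChain, or any vector database API.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass
class Product:
    product_id: str
    image_vector: Sequence[float]  # embedding of the product photo
    text_vector: Sequence[float]   # embedding of the product description
    price: float


def _cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def multimodal_search(
    products: List[Product],
    image_query: Sequence[float],
    text_query: Sequence[float],
    max_price: Optional[float] = None,
    image_weight: float = 0.5,
    k: int = 5,
) -> List[Tuple[str, float]]:
    """Blend image and text similarity after applying a price filter."""
    results = []
    for product in products:
        # "Under $100" is a hard constraint, so filter before scoring.
        if max_price is not None and product.price >= max_price:
            continue
        score = (
            image_weight * _cosine(image_query, product.image_vector)
            + (1 - image_weight) * _cosine(text_query, product.text_vector)
        )
        results.append((product.product_id, score))
    results.sort(key=lambda item: item[1], reverse=True)
    return results[:k]


# Toy catalog with made-up two-dimensional vectors.
catalog = [
    Product("blue_runner", [1.0, 0.0], [1.0, 0.0], price=80.0),
    Product("blue_runner_pro", [1.0, 0.0], [1.0, 0.0], price=150.0),
    Product("red_boot", [0.0, 1.0], [0.0, 1.0], price=60.0),
]

hits = multimodal_search(catalog, [1.0, 0.0], [1.0, 0.0], max_price=100.0)
```

The top few `hits` would then go to the vision model, exactly as in the hybrid pattern.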

Tools, Models, and MCP Servers

For preprocessing images before you embed or analyze them, ImageSorcery is the one to know. It's open-source, runs locally, and uses OpenCV and Ultralytics under the hood. Object detection, OCR, resizing, content-based search. Useful for cleaning up images before they go to embedding models or vision models. OpenCV MCP Server exists if you need custom image pipelines built on pure OpenCV.
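Resizing is the preprocessing step with the most direct effect on cost, since larger images mean more tokens. The sketch below only computes target dimensions that preserve aspect ratio. It is plain Python, not ImageSorcery's or OpenCV's API, and `fit_within` and its `max_edge_px` parameter are names I chose for illustration. You would pass the result to whatever resize call your image library provides.

```python
from typing import Tuple


def fit_within(width_px: int, height_px: int, max_edge_px: int) -> Tuple[int, int]:
    """Scale dimensions down so the longest edge is at most max_edge_px.

    Images that already fit are returned unchanged; nothing is upscaled.
    """
    if width_px <= 0 or height_px <= 0 or max_edge_px <= 0:
        raise ValueError("dimensions must be positive")
    longest = max(width_px, height_px)
    if longest <= max_edge_px:
        return width_px, height_px
    scale = max_edge_px / longest
    # Clamp to 1 so extreme aspect ratios never round down to zero pixels.
    return max(1, round(width_px * scale)), max(1, round(height_px * scale))


# Example: a 4000x3000 photo with an arbitrary 1024 px limit on the long edge.
target_size = fit_within(4000, 3000, max_edge_px=1024)
```

The right `max_edge_px` depends on the model you are feeding, so treat the 1024 in the example as a placeholder.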

Vector databases have matured significantly. Pinecone, Qdrant, Weaviate, and Milvus all store image embeddings the same way they store any other vector. As long as your image and text embeddings come from the same shared-space model, you can keep them in one index and search across both modalities. This is what enables the multimodal RAG workflows.

Vision model pricing varies. Claude vision: $3 per million input tokens and $15 per million output tokens on Sonnet-class models (images are counted as input tokens). GPT-4V and GPT-5.1 have vision built in and price per token like any other model call. Gemini is multimodal with a context window large enough for multiple images at once, which changes the equation if you're analyzing sets of related images together.

The Decision Framework

Here's the simplest way to think about it:

I need to analyze or understand a single image → Send directly to vision model. Lower cost than building infrastructure. Acceptable latency. Reasoning is the goal.

I need to search across 100+ images → Embed and use vector search. The filtering happens cheaply at scale. Then optionally send the top candidates to a vision model for reasoning.

I need to search, then analyze → Hybrid approach. Embeddings narrow the field fast. Vision model does the detailed reasoning on a small subset.

I need text and image search together → Multimodal RAG. Put text and image embeddings in the same vector space. Search both simultaneously.

I need to preprocess images first → ImageSorcery MCP or OpenCV MCP. Clean, detect objects, extract text, then either embed or send to a vision model.

Pick the row that matches your problem. Follow it.
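If you'd rather have the framework as code, here is a small routing function that encodes the first four rows. It's a sketch with names I made up (`Strategy`, `choose_strategy`), and the default threshold of 100 images is just the low end of the breakeven range mentioned earlier. Preprocessing is left out because it can sit in front of any of these paths.

```python
from enum import Enum


class Strategy(Enum):
    DIRECT_VISION = "direct_vision"
    EMBEDDING_SEARCH = "embedding_search"
    HYBRID = "hybrid"
    MULTIMODAL_RAG = "multimodal_rag"


def choose_strategy(
    n_images: int,
    needs_search: bool,
    needs_reasoning: bool,
    needs_text_and_image_search: bool = False,
    search_threshold: int = 100,  # assumed breakeven; tune for your workload
) -> Strategy:
    """Map a workload description onto one row of the decision framework."""
    # Small collections: just send everything to the vision model.
    if n_images < search_threshold:
        return Strategy.DIRECT_VISION
    if needs_text_and_image_search:
        return Strategy.MULTIMODAL_RAG
    if needs_search and needs_reasoning:
        return Strategy.HYBRID
    if needs_search:
        return Strategy.EMBEDDING_SEARCH
    # Large collection, reasoning on every image, no search step to narrow it.
    return Strategy.DIRECT_VISION


catalog_strategy = choose_strategy(10_000, needs_search=True, needs_reasoning=True)
```

The final branch is the expensive case: if every image truly needs reasoning and nothing can be filtered first, no routing trick reduces the number of vision calls.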

The 2026 consensus is clear on this. Don't vectorize what you can reason about directly. Don't run expensive vision models on what you can search with embeddings. The hybrid approach wins for most production systems because it respects both the strengths and constraints of each tool.

Start with one question: Am I searching or reasoning? Then the right architecture follows naturally. Everything else is implementation detail.