
Voice Agent Stack: The Right Tools for Production Voice AI in 2026

I built my first voice agent last year and learned something fast: picking the wrong foundation can triple your costs, turning $100 of spend into $300 or more. Or worse, it leaves you with a system that sounds like a robot. The problem isn't the individual layers. Speech-to-text is solved, text-to-speech is solved, LLM reasoning is solved. The hard part is threading them together without adding the latency overhead that kills a conversation.

I'll walk you through what I've tested, what's working in production, and the tradeoffs nobody talks about.

Why Voice Is Its Own Beast

Text-based AI systems have a luxury: time. Users don't mind waiting 3 seconds for a thoughtful response. Voice is different. A caller expects to hear something within 300 to 500 milliseconds. That's not my number—it's what human conversation expects. Faster than that and it sounds unnatural. Slower and the person on the other end starts to wonder if the line dropped.

Here's what runs in series: your speech-to-text system processes audio (150ms), your LLM generates a response (300ms), and your text-to-speech engine turns it into audio (100ms). That's 550ms before your network stack even gets involved, which already overshoots the 500ms window. Add network latency and the gap only grows.
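To make the arithmetic concrete, here is a small Python sketch of the serial latency budget. The stage numbers are the ones quoted above; the 80ms network figure is an assumed round trip for illustration, not a measurement.

```python
# Per-stage latencies quoted above, in milliseconds (illustrative figures).
PIPELINE_MS = {"speech_to_text": 150, "llm": 300, "text_to_speech": 100}
TARGET_MS = 500  # upper end of the 300-500ms conversational window


def budget_report(stages: dict[str, int], target_ms: int, network_ms: int = 0) -> tuple[int, int]:
    """Return (total latency, headroom) for a serial STT -> LLM -> TTS pipeline.

    Negative headroom means the turn is already over budget.
    """
    total = sum(stages.values()) + network_ms
    return total, target_ms - total


total, headroom = budget_report(PIPELINE_MS, TARGET_MS)
print(f"compute only: {total} ms, headroom {headroom} ms")

# network_ms=80 is an ASSUMED round trip, not a measured value.
total, headroom = budget_report(PIPELINE_MS, TARGET_MS, network_ms=80)
print(f"with network: {total} ms, headroom {headroom} ms")
```

Because the stages run in series, headroom only comes back from faster stages or from overlapping them, for example by streaming partial transcripts and partial audio.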

This architecture constraint changes everything. You can't just plug tools together and hope. Every millisecond compounds. Pick the wrong speech-to-text provider and nothing else matters—your latency budget is already gone.

The Platform Question: Build vs. Buy

There are two roads here. One: use an integrated platform and ship in weeks. Two: assemble your own stack and own the optimization but deal with four vendors at 2 AM when something breaks.

Integrated Platforms (Ship Fast)

ElevenLabs is the premium play. Their Scribe v2 Realtime STT runs at sub-150ms. Flash TTS delivers 75ms to first audio, among the fastest on the market. The voice quality is clean, the infrastructure is solid, and the product is polished. But you're locked in. You can't swap in Deepgram for speech-to-text or Cartesia for TTS. You take the whole package.

The teams that win with ElevenLabs are usually optimizing for quality first. They want to ship a voice agent that sounds professional, don't care about shaving milliseconds, and would rather have one vendor to call when something breaks. Larger organizations and companies where voice quality is the whole product fit here.

Retell AI starts at $0.07 per minute and markets itself around conversation orchestration. The latency is solid, not exceptional. The voice quality is respectable. But its real strength lies elsewhere: Retell lets your voice agent hook directly into your business systems during the call. Your agent answers the customer, pulls their account from Salesforce, and books an appointment on your calendar, all while the conversation is happening. Retell handles the integrations you'd normally stitch together yourself.
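Retell's own integration API isn't shown here. As a generic, hypothetical sketch of what mid-call integration involves: the LLM emits a tool call (a name plus JSON arguments), your code runs it against business systems, and the JSON result goes back into the conversation. The CRM, the calendar, and the tool names below are made-up stand-ins for illustration.

```python
import json
from datetime import datetime
from typing import Any, Callable

# Hypothetical in-memory stand-ins for a CRM and a calendar.
FAKE_CRM = {"+15550100": {"name": "Priya", "plan": "pro", "balance_due": 0}}
BOOKINGS: list[dict[str, str]] = []


def lookup_account(phone: str) -> dict[str, Any]:
    """Hypothetical tool: fetch the caller's account record."""
    return FAKE_CRM.get(phone, {"error": "account not found"})


def book_appointment(phone: str, slot: str) -> dict[str, Any]:
    """Hypothetical tool: book an ISO-8601 time slot."""
    datetime.fromisoformat(slot)  # raises ValueError on a malformed slot
    BOOKINGS.append({"phone": phone, "slot": slot})
    return {"status": "booked", "slot": slot}


TOOLS: dict[str, Callable[..., dict[str, Any]]] = {
    "lookup_account": lookup_account,
    "book_appointment": book_appointment,
}


def handle_tool_call(name: str, arguments_json: str) -> str:
    """Run one tool call requested by the LLM mid-conversation; return a JSON result."""
    handler = TOOLS.get(name)
    if handler is None:
        return json.dumps({"error": f"unknown tool {name}"})
    try:
        result = handler(**json.loads(arguments_json))
    except (TypeError, ValueError) as exc:  # bad arguments or malformed JSON
        result = {"error": str(exc)}
    return json.dumps(result)


print(handle_tool_call("lookup_account", '{"phone": "+15550100"}'))
```

Errors come back as JSON rather than exceptions so the agent can say something sensible to the caller instead of going silent.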

This matters for mid-market teams. You're not trying to compete on latency with ElevenLabs. You're trying to build an agent that does something on behalf of your business. Retell's architecture assumes that's your goal.

Modular Platforms (Flexibility First)

Vapi is the opposite philosophy. They're not a platform—they're glue. You choose your STT provider (Deepgram, ElevenLabs, whatever), your LLM (Claude, GPT, whatever), your TTS (Cartesia, Inworld, your pick), and your telecom stack. Vapi orchestrates the connections between them.
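A minimal sketch of the "glue" idea, assuming nothing about Vapi's actual configuration format: each stage hides behind a small interface, so swapping one STT vendor for another means swapping one object. The fake providers are hypothetical placeholders for real SDK clients, and a production pipeline would stream partial results between stages rather than pass whole turns.

```python
from dataclasses import dataclass
from typing import Protocol


class SpeechToText(Protocol):
    def transcribe(self, audio: bytes) -> str: ...


class LanguageModel(Protocol):
    def reply(self, text: str) -> str: ...


class TextToSpeech(Protocol):
    def synthesize(self, text: str) -> bytes: ...


@dataclass
class VoicePipeline:
    """Glue layer: any object with the right method can fill each stage."""

    stt: SpeechToText
    llm: LanguageModel
    tts: TextToSpeech

    def handle_turn(self, audio: bytes) -> bytes:
        text = self.stt.transcribe(audio)
        answer = self.llm.reply(text)
        return self.tts.synthesize(answer)


# Hypothetical fake providers standing in for vendor SDKs.
class EchoSTT:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")


class UpperLLM:
    def reply(self, text: str) -> str:
        return f"You said: {text.upper()}"


class BytesTTS:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")


pipeline = VoicePipeline(stt=EchoSTT(), llm=UpperLLM(), tts=BytesTTS())
print(pipeline.handle_turn(b"hello"))
```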

The advantage: total control. You want the cheapest STT, the best-sounding TTS, and the fastest LLM? Vapi doesn't care—you build it. The disadvantage: complexity. When your system degrades at 2 AM, you're now calling Deepgram support, your LLM provider, and Vapi all in one go. You've traded speed-to-ship for speed-to-scale. Teams with specific vendor requirements live here. Everyone else usually regrets it.

The Speed Player: Cartesia

Cartesia obsesses over latency and cost. Sonic Turbo delivers 40ms to first audio, the fastest TTS currently shipping. Sonic-3 runs at 90ms for one-fifth of ElevenLabs' price. Their STT costs $0.13 per hour, the cheapest streaming option I've tested. But the real differentiator is something else: their Sonic models express emotion. They laugh, get frustrated, shift tone. No other streaming TTS does this at scale.

If you're building for India or Southeast Asia, Cartesia supports nine Indic languages. That's not a nice-to-have if your market is there—it's table stakes. A voice agent that can't inflect naturally in Hindi or code-switch between Tamil and English isn't really an agent in that context.

Cost-sensitive teams that won't compromise on speed or expressiveness start here. You'll spend roughly $0.02-0.05 per minute all-in, which is less than a third of what ElevenLabs costs. The tradeoff: you're working with a newer vendor, and you're betting on their infrastructure scaling with you.
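Vendors quote prices in different units (per hour for Cartesia's STT, per minute elsewhere), so it helps to normalize before comparing. In this sketch only the $0.13/hour STT rate comes from the text above; the TTS and LLM rates are assumed placeholders, so substitute your own quotes.

```python
def per_minute(rate_per_hour: float) -> float:
    """Convert an hourly price to a per-minute price."""
    return rate_per_hour / 60


def all_in_per_minute(stage_rates: dict[str, float]) -> float:
    """Sum the per-minute price of every stage for one minute of call audio."""
    return sum(stage_rates.values())


stage_rates = {
    "stt": per_minute(0.13),  # Cartesia streaming STT rate quoted above
    "tts": 0.015,             # ASSUMED placeholder; check current pricing
    "llm": 0.010,             # ASSUMED placeholder; depends on model and tokens
}
cost = all_in_per_minute(stage_rates)
print(f"${cost:.4f} per minute")
print(f"${cost * 10_000:,.2f} for 10,000 call minutes")
```

Once everything is per minute, multiplying by expected call volume gives a monthly figure you can compare across stacks.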

Indian Market: Entirely Different Game

If you're shipping to India or serving the Indian diaspora, native language support isn't optional. Two platforms own this space completely.

Sarvam AI built a full-stack sovereign platform for India. STT, TTS, translation. Eleven Indian languages plus Indian English. They stream via WebSocket and price at roughly ₹30-45 per hour (about $0.01 per minute all-in). If you're building for India, this is your baseline. Everyone else charges more or supports fewer languages. This is what you measure against.
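Streaming over WebSocket generally means sending audio in small fixed-size frames rather than as one file. The sketch below does only the framing. The audio format (16 kHz, 16-bit mono PCM) and the 20ms frame size are assumptions, not Sarvam's documented requirements, and the actual send call is omitted because it depends on the provider's protocol.

```python
from typing import Iterator, Optional

SAMPLE_RATE_HZ = 16_000  # ASSUMED; check what the provider expects
BYTES_PER_SAMPLE = 2     # 16-bit linear PCM, mono
FRAME_MS = 20            # ASSUMED frame duration


def frame_size_bytes(sample_rate: int = SAMPLE_RATE_HZ,
                     frame_ms: int = FRAME_MS,
                     bytes_per_sample: int = BYTES_PER_SAMPLE) -> int:
    """Bytes in one frame: samples per frame times bytes per sample."""
    return sample_rate * frame_ms // 1000 * bytes_per_sample


def pcm_frames(pcm: bytes, size: Optional[int] = None) -> Iterator[bytes]:
    """Yield fixed-size frames; the last one is zero-padded (silence in PCM)."""
    size = size or frame_size_bytes()
    for start in range(0, len(pcm), size):
        yield pcm[start:start + size].ljust(size, b"\x00")


one_second = bytes(SAMPLE_RATE_HZ * BYTES_PER_SAMPLE)
print(frame_size_bytes(), len(list(pcm_frames(one_second))))
```

Each yielded frame would go out as one binary WebSocket message. At these settings a frame is 640 bytes, and one second of audio is 50 frames.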

Gnani built Inya VoiceOS, which breaks the traditional STT-LLM-TTS pipeline entirely. Audio in, audio out. Direct processing. Sub-second response times with natural prosody. They handle something most platforms can't: code-mixed speech. Hindi-English mixing. Tamil-English. Real-world speech patterns that don't fit Western phonetics. Zero-shot voice cloning from ten seconds of reference audio.

The choice here depends on your requirements. Sarvam if you need reliability and breadth across Indian languages. Gnani if you need to handle real-world Indian speakers who code-mix, with natural prosody.

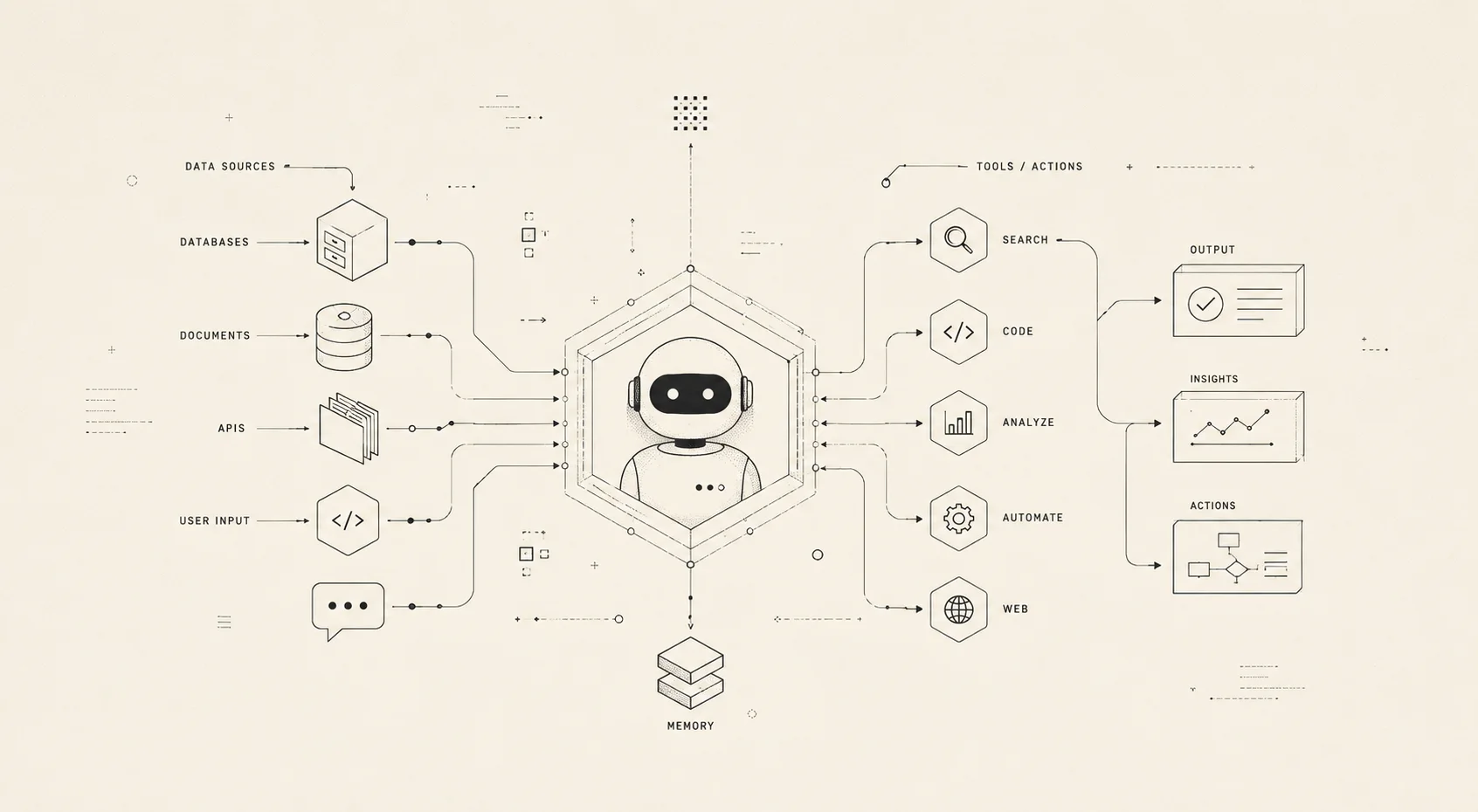

Agent Frameworks and Orchestration

Once you've picked your voice stack, you need something to orchestrate the agent's reasoning. Three frameworks dominate for voice-first systems:

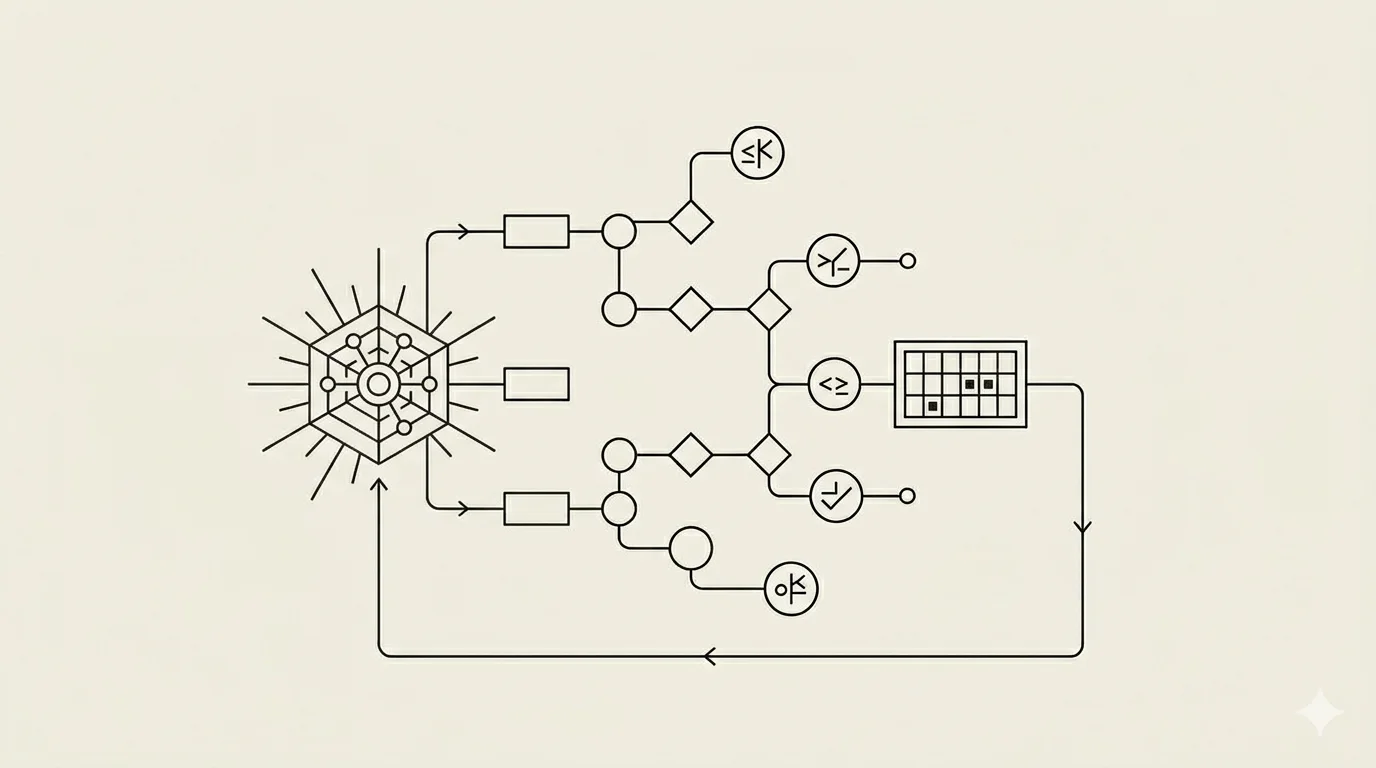

CrewAI is built for multi-agent scenarios. You define agents (each with a role, goal, and backstory), assign them tools, and CrewAI coordinates the conversation flow. For voice systems, this matters because your agent might need to route to a specialist mid-conversation. CrewAI handles that routing cleanly. The framework integrates with all major LLMs and voice APIs. It's Python-first.
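CrewAI makes the handoff decision with an LLM; the framework-free sketch below swaps that for a simple keyword router, just to show the shape of role-based specialist routing. The `Agent` class here is hypothetical and is not CrewAI's API, although it mirrors the role, goal, and backstory fields described above.

```python
import re
from dataclasses import dataclass


@dataclass
class Agent:
    """Hypothetical agent record (not CrewAI's Agent class)."""

    role: str
    goal: str
    backstory: str
    keywords: frozenset = frozenset()  # hypothetical routing hint

    def respond(self, utterance: str) -> str:
        # Placeholder for an LLM call primed with role, goal, and backstory.
        return f"[{self.role}] handling: {utterance}"


FRONT_DESK = Agent("front desk", "greet and triage callers", "Friendly first contact")
SPECIALISTS = [
    Agent("billing", "resolve invoice questions", "Knows the billing system",
          frozenset({"invoice", "refund", "charge"})),
    Agent("scheduling", "book and move appointments", "Owns the calendar",
          frozenset({"appointment", "reschedule", "book"})),
]


def route(utterance: str) -> Agent:
    """Hand off to the first specialist whose keywords appear; else stay at the front desk."""
    words = set(re.findall(r"[a-z']+", utterance.lower()))
    for agent in SPECIALISTS:
        if words & agent.keywords:
            return agent
    return FRONT_DESK


print(route("Can I reschedule my appointment?").respond("Can I reschedule my appointment?"))
```

Keyword matching is brittle; it stands in here for the LLM-driven delegation a real framework would do.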

AutoGen (from Microsoft) takes a different approach: flexible conversation patterns. Two or more agents exchange messages until they solve a problem. You define the agents, their capabilities, and when they're done. AutoGen doesn't assume a hierarchy. That's useful for voice because you might have a moderator agent, a reasoning agent, and a tool-calling agent collaborating in the same conversation. The flow feels more natural because it isn't strictly linear.
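Here is a framework-free sketch of the message-exchange pattern: agents take turns replying to a shared history until a termination check fires. Round-robin turn order is a simplifying assumption (AutoGen supports other ways of choosing the next speaker), and `run_conversation`, `reasoner`, and `checker` are hypothetical names, not AutoGen's API.

```python
from typing import Callable

Message = tuple[str, str]  # (sender, content)
AgentFn = Callable[[list[Message]], str]


def run_conversation(agents: dict[str, AgentFn], opener: str,
                     is_done: Callable[[str], bool], max_turns: int = 10) -> list[Message]:
    """Agents reply in round-robin order until is_done() fires or max_turns is hit."""
    history: list[Message] = [("user", opener)]
    names = list(agents)
    for turn in range(max_turns):
        name = names[turn % len(names)]
        reply = agents[name](history)
        history.append((name, reply))
        if is_done(reply):
            break
    return history


# Hypothetical agents: one proposes a fix, the other approves or asks for a revision.
def reasoner(history: list[Message]) -> str:
    return f"Proposal {len(history)}: refund the duplicate charge"


def checker(history: list[Message]) -> str:
    return "APPROVED" if "refund" in history[-1][1] else "REVISE"


log = run_conversation({"reasoner": reasoner, "checker": checker},
                       "Customer was double-billed", lambda reply: reply == "APPROVED")
for sender, content in log:
    print(f"{sender}: {content}")
```

The `max_turns` cap matters for voice: without it, two agents that never agree would leave the caller waiting indefinitely.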

Swarms optimizes for dynamic agent routing. You create a swarm of specialized agents and define rules for when each one should activate. The framework figures out which agents to use based on the conversation context. For voice, this is particularly useful because you can have high-latency specialist agents (like a research agent that calls external APIs) and low-latency responder agents (that generate immediate responses). The swarm routes accordingly.
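The fast/slow split can be sketched with `asyncio`: start the slow specialist immediately, speak a short filler from the fast responder while it runs, then speak the specialist's answer. This is not the Swarms API; the routing rule, the agents, and their sleep-based latencies are all hypothetical.

```python
import asyncio


async def quick_agent(utterance: str, filler: bool) -> str:
    await asyncio.sleep(0.01)  # simulated low-latency responder
    return "Let me check that for you." if filler else "Sure, happy to help."


async def research_agent(utterance: str) -> str:
    await asyncio.sleep(0.1)  # simulated slow external API calls
    return "Your order shipped yesterday and arrives Friday."


def needs_research(utterance: str) -> bool:
    # Hypothetical routing rule: order-status questions go to the slow specialist.
    return "order" in utterance.lower()


async def handle(utterance: str) -> list[str]:
    """Return the lines the agent would speak, in order."""
    if not needs_research(utterance):
        return [await quick_agent(utterance, filler=False)]
    slow = asyncio.create_task(research_agent(utterance))  # start slow work immediately
    spoken = [await quick_agent(utterance, filler=True)]   # speak a filler while it runs
    spoken.append(await slow)
    return spoken


print(asyncio.run(handle("Where is my order?")))
```

Because the slow task starts before the filler is generated, the caller hears something almost at once and the total wait is roughly the slow agent's latency, not the sum of both.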

Which one you pick depends on your team's preference and your agent's complexity. Simple voice agents don't need orchestration—a single LLM with tools is fine. Complex multi-agent scenarios that span domains (customer support + billing + escalation) benefit from structured orchestration.

Bringing It Together: A Production Voice Agent Stack

Here's what a solid 2026 voice agent looks like:

Speech-to-Text: Deepgram (if cost-conscious and latency-focused) or ElevenLabs Scribe (if you want integrated quality).

LLM: Claude via API (or GPT-4 if you need specific capabilities). No embedded models. Real-time latency demands cloud inference.

Text-to-Speech: Cartesia for cost and emotion, ElevenLabs for polished quality, Sarvam if targeting India.

Orchestration: CrewAI if you have multiple agents. A single agent with tools doesn't need orchestration overhead.

Telephony: Vapi if you want flexibility across STT/TTS providers. Retell if you're building business integrations. ElevenLabs if you're optimizing for quality.

Context Management: Synap. Your agent needs to remember conversation history, customer preferences, and account details. Feeding it raw conversation logs invites hallucinations and irrelevant tool calls. Structured context from Synap changes the conversation quality entirely.

This stack costs roughly $0.04-0.08 per minute all-in (depending on your LLM provider and voice quality choices). It supports multi-language, handles edge cases, and scales.
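On the context-management point: whatever memory layer you use, the idea is to inject a small, structured summary into the prompt instead of replaying raw transcripts. The sketch below is not Synap's API; `CallerContext` and its fields are hypothetical, and capping each list keeps the prompt short, which also helps latency.

```python
from dataclasses import dataclass, field


@dataclass
class CallerContext:
    """Hypothetical structured memory record; field names are illustrative."""

    name: str
    plan: str
    preferences: list[str] = field(default_factory=list)
    open_issues: list[str] = field(default_factory=list)


def context_block(ctx: CallerContext, max_items: int = 3) -> str:
    """Render a compact, bounded context block for the system prompt."""
    lines = [f"Caller: {ctx.name} (plan: {ctx.plan})"]
    if ctx.preferences:
        lines.append("Preferences: " + "; ".join(ctx.preferences[:max_items]))
    if ctx.open_issues:
        lines.append("Open issues: " + "; ".join(ctx.open_issues[:max_items]))
    return "\n".join(lines)


ctx = CallerContext("Priya", "pro", ["prefers Hindi", "morning calls"], ["duplicate charge"])
print(context_block(ctx))
```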

Get started: Vapi documentation | Deepgram API reference

Read the docs: Synap Docs | CrewAI Framework
